diff --git a/.gitmodules b/.gitmodules

index 7e27969..c0ebaa3 100644

--- a/.gitmodules

+++ b/.gitmodules

@@ -4,3 +4,6 @@

[submodule "teleop/robot_control/dex-retargeting"]

path = teleop/robot_control/dex-retargeting

url = https://github.com/silencht/dex-retargeting

+[submodule "teleop/teleimager"]

+ path = teleop/teleimager

+ url = https://github.com/silencht/teleimager.git

diff --git a/CHANGELOG.md b/CHANGELOG.md

index b0b2596..8abc4f8 100644

--- a/CHANGELOG.md

+++ b/CHANGELOG.md

@@ -1,5 +1,19 @@

# 🔖 Release Note

+## 🏷️ v1.4

+

+- The **image server** has been changed to [teleimager](https://github.com/silencht/teleimager). Please refer to the repository README for details.

+

+- [televuer](https://github.com/silencht/televuer) has been upgraded. Please see the repository README for details.

+

+ > The new versions of [teleimager](https://github.com/silencht/teleimager/commit/ab5018691943433c24af4c9a7f3ea0c9a6fbaf3c) + [televuer](https://github.com/silencht/televuer/releases/tag/v3.0) support transmitting head camera images via **WebRTC**.

+

+- Enriched the task information parameters in **recording mode**, fixing and improving EpisodeWriter.

+- Improved the system’s **state machine** information and IPC mode.

+- Added **pass-through mode**, allowing direct viewing of the external environment through a VR device camera (without using the robot’s head camera).

+- Added **CPU affinity mode**. If you are not familiar with this mode, you can ignore it.

+- Added **motion-switcher** functionality, allowing automatic debug mode entry and exit without using a remote controller.

+

## 🏷️ v1.3

- add [](https://github.com/unitreerobotics/xr_teleoperate/wiki) [](https://discord.gg/ZwcVwxv5rq)

diff --git a/CHANGELOG_zh-CN.md b/CHANGELOG_zh-CN.md

index aa75c40..2a1a17c 100644

--- a/CHANGELOG_zh-CN.md

+++ b/CHANGELOG_zh-CN.md

@@ -1,5 +1,25 @@

# 🔖 版本说明

+## 🏷️ v1.4

+

+- **图像服务器**变更为 [teleimager](https://github.com/silencht/teleimager),具体请查看仓库README。

+

+- 升级 [televuer](https://github.com/silencht/televuer),具体请查看仓库README。

+

+ > 新版本的 [teleimager](https://github.com/silencht/teleimager/commit/ab5018691943433c24af4c9a7f3ea0c9a6fbaf3c) + [televuer](https://github.com/silencht/televuer/releases/tag/v3.0) 支持通过 **webrtc** 传输头部相机图像

+ >

+ > 支持 pass-through, ego, immersive 三种模式:pass-through 为通透模式,直接通过 VR 相机查看现实世界来观察机器人;ego 是在通透模式的基础上,添加一个机器人视角的小窗;immersive 是完全沉浸机器人第一人称视角模式。

+

+- 丰富**录制模式**下的任务信息传递参数,修复和完善 EpisodeWriter。

+

+- 完善系统的**状态机信息**、IPC模式。

+

+- 新增 **affinity CPU 亲和模式**,如果你不了解该模式,那么请无视它。

+

+- 新增 **motion-switcher 功能**,无需遥控器即可自动进退 debug 模式。

+

+- 支持 **inspire_FTP** 灵巧手

+

## 🏷️ v1.3

- 添加 [](https://github.com/unitreerobotics/xr_teleoperate/wiki) [](https://discord.gg/ZwcVwxv5rq)

diff --git a/README.md b/README.md

index 45569f1..1f01578 100644

--- a/README.md

+++ b/README.md

@@ -36,15 +36,20 @@

# 🔖[Release Note](CHANGELOG.md)

-## 🏷️ v1.3

+## 🏷️ v1.4

-- add [](https://github.com/unitreerobotics/xr_teleoperate/wiki) [](https://discord.gg/ZwcVwxv5rq)

+- The **image server** has been changed to [teleimager](https://github.com/silencht/teleimager). Please refer to the repository README for details.

-- Support **IPC mode**, defaulting to use SSHKeyboard for input control.

-- Merged motion mode support for H1_2 robot.

-- Merged motion mode support for the G1_23 robot arm.

+- Upgraded [televuer](https://github.com/silencht/televuer). Please see the repository README for details.

-- ···

+ > The new versions of [teleimager](https://github.com/silencht/teleimager/commit/ab5018691943433c24af4c9a7f3ea0c9a6fbaf3c) + [televuer](https://github.com/silencht/televuer/releases/tag/v3.0) support transmitting **head camera images via WebRTC**.

+ > Supports **pass-through**, **ego**, and **immersive** modes.

+

+- Improved the system’s **state machine** information and IPC mode.

+

+- Added support for **Inspire_FTP dexterous hand**.

+

+- …

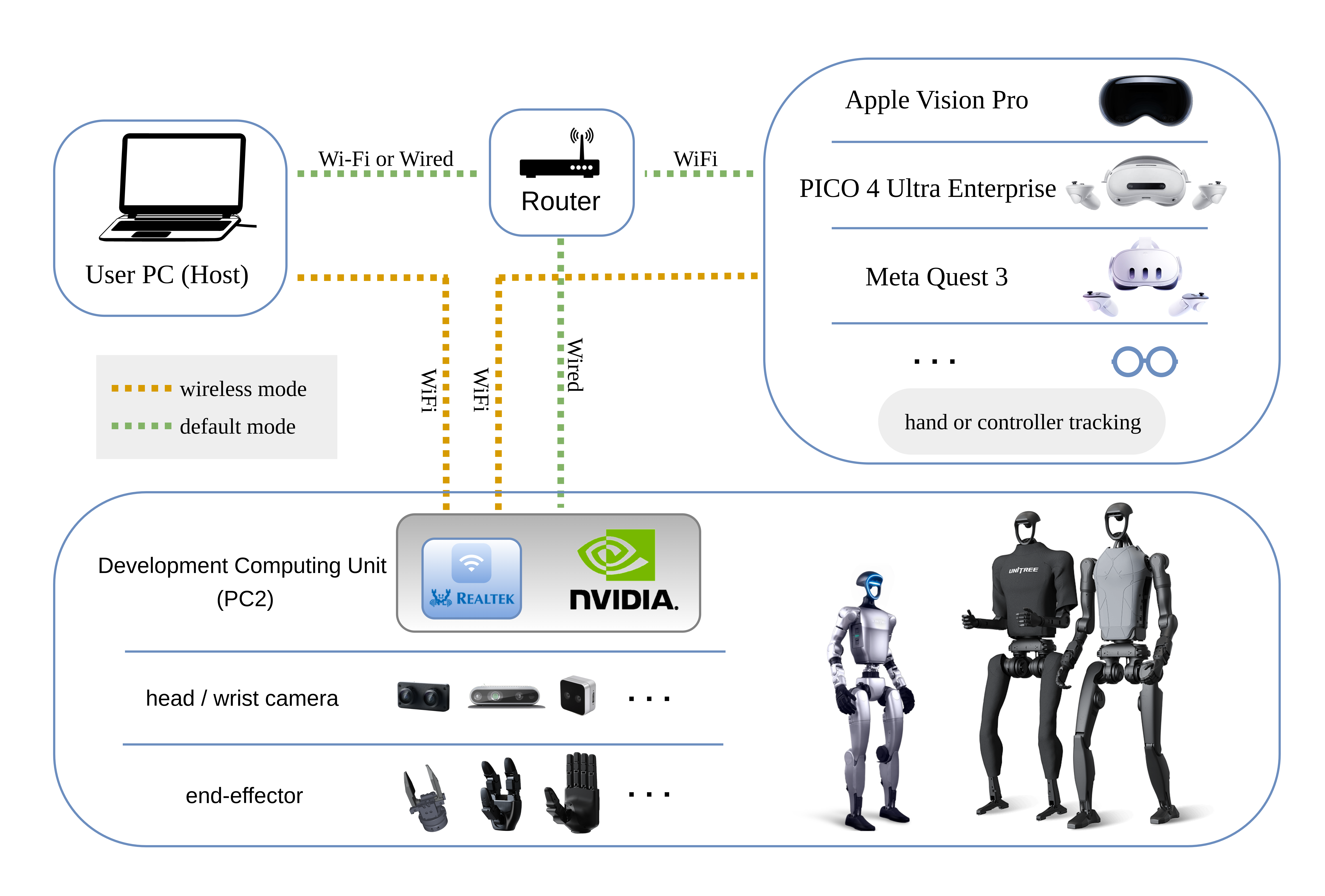

# 0. 📖 Introduction

@@ -56,8 +61,8 @@ Additionally, the [Wiki of this repo](https://github.com/unitreerobotics/xr_tele

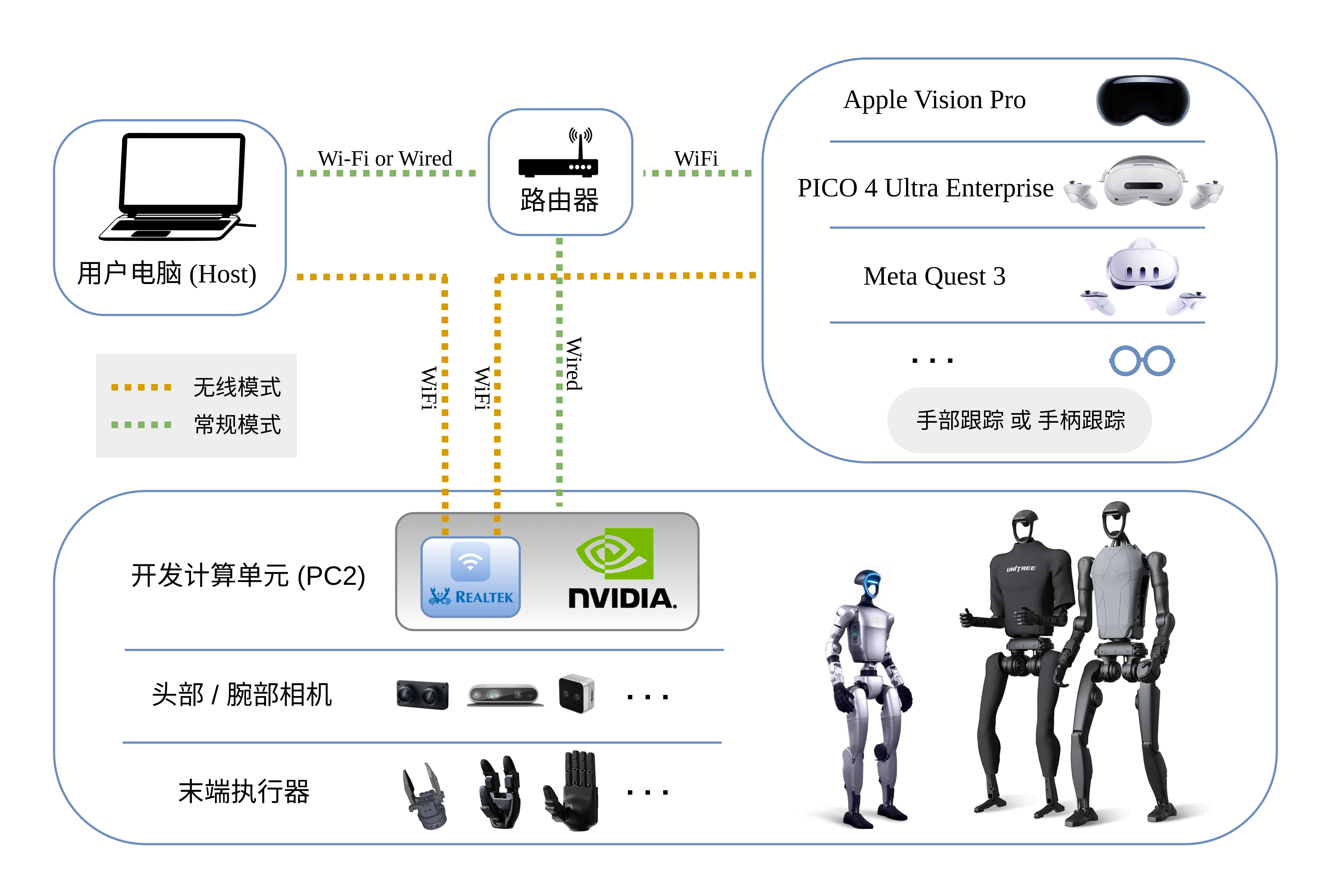

Here are the required devices and wiring diagram,

-

-  +

+

+

+

@@ -126,19 +131,55 @@ For more information, you can refer to [Official Documentation ](https://support

(tv) unitree@Host:~$ cd xr_teleoperate

# Shallow clone submodule

(tv) unitree@Host:~/xr_teleoperate$ git submodule update --init --depth 1

+```

+

+```bash

+# Install teleimager submodule

+(tv) unitree@Host:~/xr_teleoperate$ cd teleop/teleimager

+(tv) unitree@Host:~/xr_teleoperate/teleop/teleimager$ pip install -e . --no-deps

+```

+

+```bash

# Install televuer submodule

(tv) unitree@Host:~/xr_teleoperate$ cd teleop/televuer

(tv) unitree@Host:~/xr_teleoperate/teleop/televuer$ pip install -e .

-# Generate the certificate files required for televuer submodule

+

+# Configure SSL certificates for the televuer module so that XR devices (e.g., Pico / Quest / Apple Vision Pro) can securely connect via HTTPS / WebRTC

+# 1. Generate certificate files

+# 1.1 For Pico / Quest XR devices

(tv) unitree@Host:~/xr_teleoperate/teleop/televuer$ openssl req -x509 -nodes -days 365 -newkey rsa:2048 -keyout key.pem -out cert.pem

-# Install dex-retargeting submodule

-(tv) unitree@Host:~/xr_teleoperate/teleop/televuer$ cd ../robot_control/dex-retargeting/

-(tv) unitree@Host:~/xr_teleoperate/teleop/robot_control/dex-retargeting$ pip install -e .

-# Install additional dependencies required by this repo

-(tv) unitree@Host:~/xr_teleoperate/teleop/robot_control/dex-retargeting$ cd ../../../

-(tv) unitree@Host:~/xr_teleoperate$ pip install -r requirements.txt

+# 1.2 For Apple Vision Pro

+(tv) unitree@Host:~/xr_teleoperate/teleop/televuer$ openssl genrsa -out rootCA.key 2048

+(tv) unitree@Host:~/xr_teleoperate/teleop/televuer$ openssl req -x509 -new -nodes -key rootCA.key -sha256 -days 365 -out rootCA.pem -subj "/CN=xr-teleoperate"

+(tv) unitree@Host:~/xr_teleoperate/teleop/televuer$ openssl genrsa -out key.pem 2048

+(tv) unitree@Host:~/xr_teleoperate/teleop/televuer$ openssl req -new -key key.pem -out server.csr -subj "/CN=localhost"

+# Create server_ext.cnf file with the following content (IP.2 should match your host IP, e.g., 192.168.123.2. Use ifconfig or similar to check)

+(tv) unitree@Host:~/xr_teleoperate/teleop/televuer$ vim server_ext.cnf

+subjectAltName = @alt_names

+[alt_names]

+DNS.1 = localhost

+IP.1 = 192.168.123.164

+IP.2 = 192.168.123.2

+(tv) unitree@Host:~/xr_teleoperate/teleop/televuer$ openssl x509 -req -in server.csr -CA rootCA.pem -CAkey rootCA.key -CAcreateserial -out cert.pem -days 365 -sha256 -extfile server_ext.cnf

+(tv) unitree@Host:~/xr_teleoperate/teleop/televuer$ ls

+build cert.pem key.pem LICENSE pyproject.toml README.md rootCA.key rootCA.pem rootCA.srl server.csr server_ext.cnf src test

+# Copy rootCA.pem to Apple Vision Pro via AirDrop and install it

+

+# Enable firewall

+(tv) unitree@Host:~/xr_teleoperate/teleop/televuer$ sudo ufw allow 8012

+

+# 2. Configure certificate paths, choose one method

+# 2.1 User config directory (optional)

+(tv) unitree@Host:~/xr_teleoperate/teleop/televuer$ mkdir -p ~/.config/xr_teleoperate/

+(tv) unitree@Host:~/xr_teleoperate/teleop/televuer$ cp cert.pem key.pem ~/.config/xr_teleoperate/

+# 2.2 Environment variables (optional)

+(tv) unitree@Host:~/xr_teleoperate/teleop/televuer$ echo 'export XR_TELEOP_CERT="$HOME/xr_teleoperate/teleop/televuer/cert.pem"' >> ~/.bashrc

+(tv) unitree@Host:~/xr_teleoperate/teleop/televuer$ echo 'export XR_TELEOP_KEY="$HOME/xr_teleoperate/teleop/televuer/key.pem"' >> ~/.bashrc

+(tv) unitree@Host:~/xr_teleoperate/teleop/televuer$ source ~/.bashrc

```

+

+

## 1.2 🕹️ unitree_sdk2_python

```bash

@@ -165,10 +206,41 @@ For more information, you can refer to [Official Documentation ](https://support

>

> You can refer to [Harley Hahn's Guide to Unix and Linux](https://www.harley.com/unix-book/book/chapters/04.html#H) and [Conda User Guide](https://docs.conda.io/projects/conda/en/latest/user-guide/getting-started.html) to learn more.

+

+

+## 1.3 🚀 Launch Parameter Description

+

+- **Basic control parameters**

+

+| ⚙️ Parameter | 📜 Description | 🔘 Available Options | 📌 Default |

+| :---------------: | :----------------------------------------------------------: | :----------------------------------------------------------: | :---------------: |

+| `--frequency` | Set the FPS for recording and control | Any reasonable float value | 30.0 |

+| `--input-mode` | Choose XR input mode (how to control the robot) | `hand` (**hand tracking**)`controller` (**controller tracking**) | `hand` |

+| `--display-mode` | Choose XR display mode (how to view the robot perspective) | `immersive` (immersive)`ego` (pass-through + small first-person window)`pass-through` (pass-through only) | `immersive` |

+| `--arm` | Select the robot arm type (see 0. 📖 Introduction) | `G1_29``G1_23``H1_2``H1` | `G1_29` |

+| `--ee` | Select the end-effector type of the arm (see 0. 📖 Introduction) | `dex1``dex3``inspire_ftp``inspire_dfx``brainco` | None |

+| `--img-server-ip` | Set the image server IP address for receiving image streams and configuring WebRTC signaling | `IPv4` address | `192.168.123.164` |

+

+- **Mode switch parameters**

+

+| ⚙️ Parameter | 📜 Description |

+| :----------: | :----------------------------------------------------------: |

+| `--motion` | **Enable motion control mode**When enabled, the teleoperation program can run alongside the robot’s motion control program.In **hand tracking** mode, the [R3 controller](https://www.unitree.com/cn/R3) can be used to control normal robot walking; in **controller tracking** mode, joysticks can also control the robot’s movement. |

+| `--headless` | **Enable headless mode**For running the program on devices without a display, e.g., the Development Computing Unit (PC2). |

+| `--sim` | **Enable [simulation mode](https://github.com/unitreerobotics/unitree_sim_isaaclab)** |

+| `--ipc` | **Inter-process communication mode**Allows controlling the xr_teleoperate program’s state via IPC. Suitable for interaction with agent programs. |

+| `--affinity` | **CPU affinity mode**Set CPU core affinity. If you are unsure what this is, do not set it. |

+| `--record` | **Enable data recording mode**Press **r** to start teleoperation, then **s** to start recording; press **s** again to stop and save the episode. Press **s** repeatedly to repeat the process. |

+| `--task-*` | Configure the save path, target, description, and steps of the recorded task. |

+

+

+

# 2. 💻 Simulation Deployment

## 2.1 📥 Environment Setup

+> Since the image service has been upgraded to `teleimager`, the simulation deployment for v1.4 is temporarily unavailable. Please use v1.3 for testing for now.

+

First, install [unitree_sim_isaaclab](https://github.com/unitreerobotics/unitree_sim_isaaclab). Follow that repo’s README.

Then launch the simulation with a G1(29 DoF) and Dex3 hand configuration:

@@ -193,23 +265,6 @@ Here is the simulation GUI:

This program supports XR control of a physical robot or in simulation. Choose modes with command-line arguments:

-- **Basic control parameters**

-

-| ⚙️ Parameter | 📜 Description | 🔘 Options | 📌 Default |

-| :---------: | :-------------------------------------------: | :----------------------------------------------------------: | :-------: |

-| `--xr-mode` | Choose XR input mode | `hand` (**hand tracking**)

`controller` (**controller tracking**) | `hand` |

-| `--arm` | Choose robot arm type (see 0. 📖 Introduction) | `G1_29`

`G1_23`

`H1_2`

`H1` | `G1_29` |

-| `--ee` | Choose end-effector (see 0. 📖 Introduction) | `dex1`

`dex3`

`inspire1`

`brainco` | none |

-

-- **Mode flags**

-

-| ⚙️ Flag | 📜 Description |

-| :----------: | :----------------------------------------------------------: |

-| `--record` | Enable **data recording**

After pressing **r** to start, press **s** to start/stop saving an episode. Can repeat. |

-| `--motion` | Enable **motion mode**

After enabling this mode, the teleoperation program can run alongside the robot's motion control.

In **hand tracking** mode, you can use the [R3 Controller](https://www.unitree.com/cn/R3) to control the robot's walking behavior;

in **controller tracking** mode, you can also use [controllers to control the robot’s movement](https://github.com/unitreerobotics/xr_teleoperate/blob/375cdc27605de377c698e2b89cad0e5885724ca6/teleop/teleop_hand_and_arm.py#L247-L257). |

-| `--headless` | Run without GUI (for headless PC2 deployment) |

-| `--sim` | Enable **simulation mode** |

-

Assuming hand tracking with G1(29 DoF) + Dex3 in simulation with recording:

```bash

@@ -229,7 +284,20 @@ Next steps:

2. Connect to the corresponding Wi‑Fi

-3. Open a browser (e.g. Safari or PICO Browser) and go to: `https://192.168.123.2:8012?ws=wss://192.168.123.2:8012`

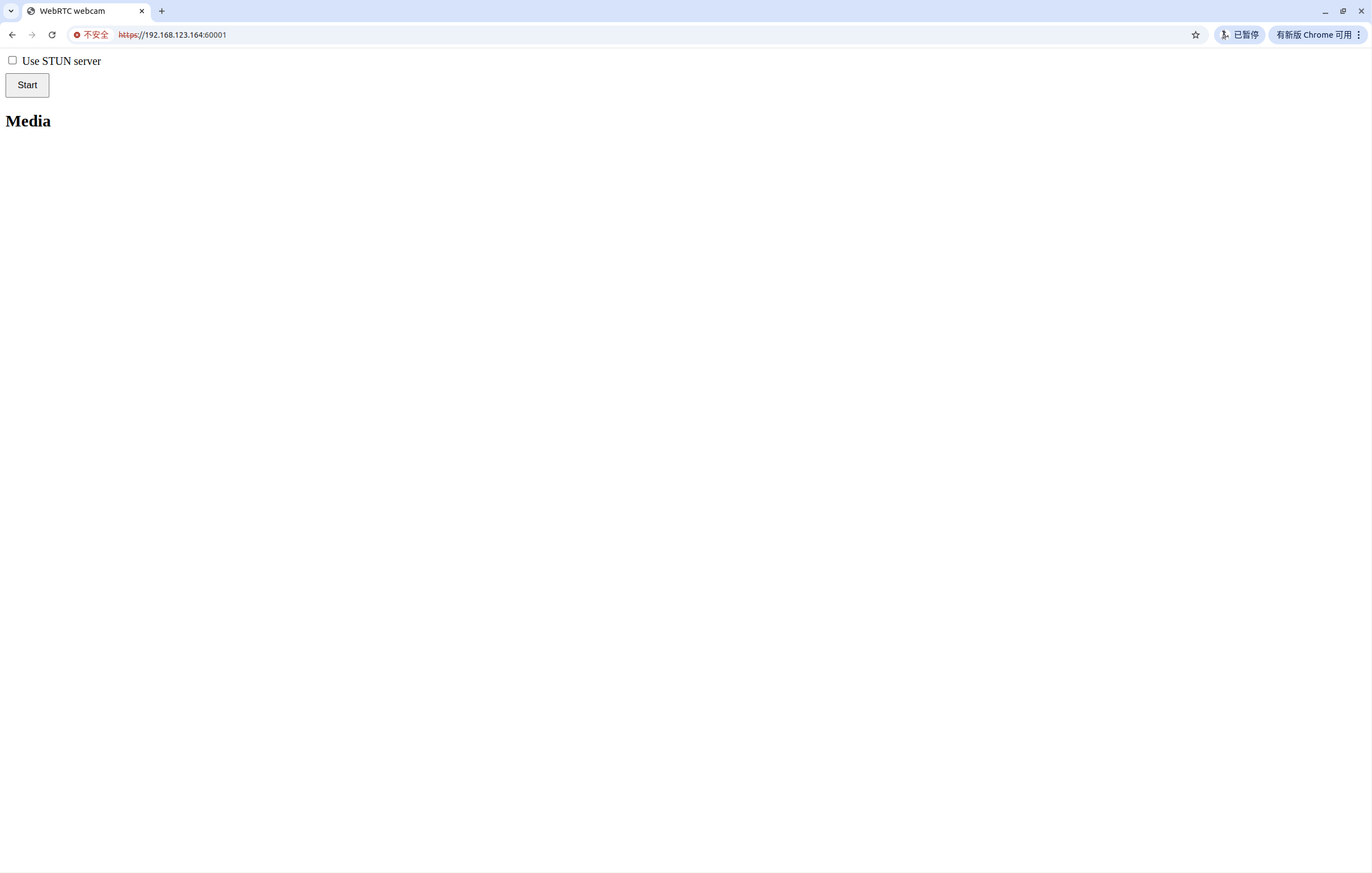

+3. Only proceed if your head camera has WebRTC enabled (`cam_config_server.yaml → head_camera → enable_webrtc: true`); otherwise jump to Step 4. Open a browser (e.g. Safari or PICO Browser) and go to:

+ **https://192.168.123.164:60001**

+

+ > **Note 1:** This IP is the address of **PC2**—the machine running teleimager service.

+ > **Note 2:** You may see a warning page like step 4. Click **Advanced**, then **Proceed to IP (unsafe)**. Once the page loads, press the **start** button in the top-left corner; if you see the head-camera preview, the check is successful.

+

+ > **Note 3:** This step serves two purposes:

+ >

+ > 1. Verify that the teleimager service is running correctly.

+ > 2. Manually trust the WebRTC self-signed certificate.

+ >

+ > Once this has been done on the same device with the same certificate, you can skip it on subsequent launches.

+

+4. Open a browser (e.g. Safari or PICO Browser) and go to: `https://192.168.123.2:8012/?ws=wss://192.168.123.2:8012`

> **Note 1**: This IP must match your **Host** IP (check with `ifconfig`).

>

@@ -241,11 +309,11 @@ Next steps:

-4. In the Vuer web, click **Virtual Reality**. Allow all prompts to start the VR session.

+5. In the Vuer web, click **Virtual Reality**. Allow all prompts to start the VR session.

-5. You’ll see the robot’s first-person view in the headset. The terminal prints connection info:

+6. You’ll see the robot’s first-person view in the headset. The terminal prints connection info:

```bash

websocket is connected. id:dbb8537d-a58c-4c57-b49d-cbb91bd25b90

@@ -253,19 +321,21 @@ Next steps:

Uplink task running. id:dbb8537d-a58c-4c57-b49d-cbb91bd25b90

```

-6. Align your arm to the **robot’s initial pose** to avoid sudden movements at start:

+7. Align your arm to the **robot’s initial pose** to avoid sudden movements at start:

-7. Press **r** in the terminal to begin teleoperation. You can now control the robot arm and dexterous hand.

+8. Press **r** in the terminal to begin teleoperation. You can now control the robot arm and dexterous hand.

-8. During teleoperation, press **s** to start recording; press **s** again to stop and save. Repeatable process.

+9. During teleoperation, press **s** to start recording; press **s** again to stop and save. Repeatable process.

> **Note 1**: Recorded data is stored in `xr_teleoperate/teleop/utils/data` by default, with usage instructions at this repo: [unitree_IL_lerobot](https://github.com/unitreerobotics/unitree_IL_lerobot/tree/main?tab=readme-ov-file#data-collection-and-conversion).

>

> **Note 2**: Please pay attention to your disk space size during data recording.

+>

+> **Note 3**: In v1.4 and above, the “record image” window has been removed.

## 2.3 🔚 Exit

@@ -281,34 +351,50 @@ Physical deployment steps are similar to simulation, with these key differences:

In the simulation environment, the image service is automatically enabled. For physical deployment, you need to manually start the image service based on your specific camera hardware. The steps are as follows:

-Copy `image_server.py` in the `xr_teleoperate/teleop/image_server` directory to the **Development Computing Unit PC2** of Unitree Robot (G1/H1/H1_2/etc.),

+1. Install the image service program on the **Development Computing Unit PC2** of the Unitree robot (G1/H1/H1_2, etc.)

-```bash

-# p.s. You can transfer image_server.py to PC2 via the scp command and then use ssh to remotely login to PC2 to execute it.

-# Assuming the IP address of the development computing unit PC2 is 192.168.123.164, the transmission process is as follows:

-# log in to PC2 via SSH and create the folder for the image server

-(tv) unitree@Host:~$ ssh unitree@192.168.123.164 "mkdir -p ~/image_server"

-# Copy the local image_server.py to the ~/image_server directory on PC2

-(tv) unitree@Host:~$ scp ~/xr_teleoperate/teleop/image_server/image_server.py unitree@192.168.123.164:~/image_server/

-```

+ ```bash

+ # SSH into PC2 and download the image service repository

+

+ (base) unitree@PC2:~$ cd ~

+ (base) unitree@PC2:~$ git clone https://github.com/silencht/teleimager

+

+ # Configure the environment according to the instructions in the teleimager repository README: https://github.com/silencht/teleimager/blob/main/README.md

+ ```

-and execute the following command **in the PC2**:

+2. On the **local host**, execute the following commands:

-```bash

-# p.s. Currently, this image transmission program supports two methods for reading images: OpenCV and Realsense SDK. Please refer to the comments in the `ImageServer` class within `image_server.py` to configure your image transmission service according to your camera hardware.

-# Now located in Unitree Robot PC2 terminal

-unitree@PC2:~/image_server$ python image_server.py

-# You can see the terminal output as follows:

-# {'fps': 30, 'head_camera_type': 'opencv', 'head_camera_image_shape': [480, 1280], 'head_camera_id_numbers': [0]}

-# [Image Server] Head camera 0 resolution: 480.0 x 1280.0

-# [Image Server] Image server has started, waiting for client connections...

-```

+ ```bash

+ # Copy the `key.pem` and `cert.pem` files configured in Section 1.1 from the **local host** `xr_teleoperate/teleop/televuer` directory to the corresponding path on PC2

+

+ # These two files are required by teleimager to start the WebRTC service

+ (tv) unitree@Host:~$ scp ~/xr_teleoperate/teleop/televuer/key.pem ~/xr_teleoperate/teleop/televuer/cert.pem unitree@192.168.123.164:~/teleimager

+

+ # On PC2, configure the certificate path according to the teleimager repository README, for example:

+ (teleimager) unitree@PC2:~$ cd teleimager

+ (teleimager) unitree@PC2:~$ mkdir -p ~/.config/xr_teleoperate/

+ (teleimager) unitree@PC2:~/teleimager$ cp cert.pem key.pem ~/.config/xr_teleoperate/

+ ```

-After image service is started, you can use `image_client.py` **in the Host** terminal to test whether the communication is successful:

+3. On the **development computing unit PC2**, configure `cam_config_server.yaml` according to the teleimager documentation and start the image service.

-```bash

-(tv) unitree@Host:~/xr_teleoperate/teleop/image_server$ python image_client.py

-```

+ ```bash

+ (teleimager) unitree@PC2:~/image_server$ python -m teleimager.image_server

+

+ # The following command works the same way

+ (teleimager) unitree@PC2:~/image_server$ teleimager-server

+ ```

+

+4. On the **local host**, execute the following command to subscribe to the images

+

+ ```bash

+ (tv) unitree@Host:~$ cd ~/xr_teleoperate/teleop/teleimager/src

+ (tv) unitree@Host:~/xr_teleoperate/teleop/teleimager/src$ python -m teleimager.image_client --host 192.168.123.164

+

+ # If the WebRTC image stream is set up, you can also open the URL [https://192.168.123.164:60001](https://192.168.123.164:60001) in a browser and click the Start button to test.

+ ```

+

+

## 3.2 ✋ Inspire Hand Service (optional)

@@ -316,7 +402,7 @@ After image service is started, you can use `image_client.py` **in the Host** te

>

> **Note 2**: For G1 robot with [Inspire DFX hand](https://support.unitree.com/home/zh/G1_developer/inspire_dfx_dexterous_hand), related issue [#46](https://github.com/unitreerobotics/xr_teleoperate/issues/46).

>

-> **Note 3**: For [Inspire FTP hand]((https://support.unitree.com/home/zh/G1_developer/inspire_ftp_dexterity_hand)), related issue [#48](https://github.com/unitreerobotics/xr_teleoperate/issues/48).

+> **Note 3**: For [Inspire FTP hand]((https://support.unitree.com/home/zh/G1_developer/inspire_ftp_dexterity_hand)), related issue [#48](https://github.com/unitreerobotics/xr_teleoperate/issues/48). FTP dexterous hand is now supported. Please refer to the `--ee` parameter for configuration.

First, use [this URL: DFX_inspire_service](https://github.com/unitreerobotics/DFX_inspire_service) to clone the dexterous hand control interface program. And Copy it to **PC2** of Unitree robots.

@@ -340,6 +426,8 @@ unitree@PC2:~/DFX_inspire_service/build$ ./hand_example

If two hands open and close continuously, it indicates success. Once successful, close the `./hand_example` program in Terminal 2.

+

+

## 3.3 ✋ BrainCo Hand Service (Optional)

Please refer to the [official documentation](https://support.unitree.com/home/en/G1_developer/brainco_hand) for setup instructions.

@@ -359,11 +447,10 @@ sudo ./brainco_hand --id 127 --serial /dev/ttyUSB2

>

>

-> 1. Everyone must keep a safe distance from the robot to prevent any potential danger!

-> 2. Please make sure to read the [Official Documentation](https://support.unitree.com/home/zh/Teleoperation) at least once before running this program.

-> 3. Without `--motion`, always make sure that the robot has entered [debug mode (L2+R2)](https://support.unitree.com/home/zh/H1_developer/Remote_control) to stop the motion control program, this will avoid potential command conflict problems.

-> 4. To use motion mode (with `--motion`), ensure the robot is in control mode (via [R3 remote](https://www.unitree.com/R3)).

-> 5. In motion mode:

+> 1. Everyone must keep a safe distance from the robot to prevent any potential danger!

+> 2. Please make sure to read the [Official Documentation](https://support.unitree.com/home/zh/Teleoperation) at least once before running this program.

+> 3. To use motion mode (with `--motion`), ensure the robot is in control mode (via [R3 remote](https://www.unitree.com/R3)).

+> 5. In motion mode:

> - Right controller **A** = Exit teleop

> - Both joysticks pressed = soft emergency stop (switch to damping mode)

> - Left joystick = drive directions;

@@ -391,37 +478,37 @@ Same as simulation but follow the safety warnings above.

```

xr_teleoperate/

│

-├── assets [Storage of robot URDF-related files]

-│

-├── hardware [3D‑printed hardware modules]

+├── assets [Stores robot URDF-related files]

│

├── teleop

-│ ├── image_server

-│ │ ├── image_client.py [Used to receive image data from the robot image server]

-│ │ ├── image_server.py [Capture images from cameras and send via network (Running on robot's Development Computing Unit PC2)]

+│ ├── teleimager [New image service library, supporting multiple features]

│ │

│ ├── televuer

│ │ ├── src/televuer

-│ │ ├── television.py [captures XR devices's head, wrist, hand/controller data]

+│ │ ├── television.py [Captures head, wrist, and hand/controller data from XR devices using Vuer]

│ │ ├── tv_wrapper.py [Post-processing of captured data]

│ │ ├── test

-│ │ ├── _test_television.py [test for television.py]

-│ │ ├── _test_tv_wrapper.py [test for tv_wrapper.py]

+│ │ ├── _test_television.py [Test program for television.py]

+│ │ ├── _test_tv_wrapper.py [Test program for tv_wrapper.py]

│ │

│ ├── robot_control

│ │ ├── src/dex-retargeting [Dexterous hand retargeting algorithm library]

-│ │ ├── robot_arm_ik.py [Inverse kinematics of the arm]

-│ │ ├── robot_arm.py [Control dual arm joints and lock the others]

-│ │ ├── hand_retargeting.py [Dexterous hand retargeting algorithm library Wrapper]

-│ │ ├── robot_hand_inspire.py [Control inspire hand joints]

-│ │ ├── robot_hand_unitree.py [Control unitree hand joints]

+│ │ ├── robot_arm_ik.py [Inverse kinematics for the arm]

+│ │ ├── robot_arm.py [Controls dual-arm joints and locks other parts]

+│ │ ├── hand_retargeting.py [Wrapper for the dexterous hand retargeting library]

+│ │ ├── robot_hand_inspire.py [Controls Inspire dexterous hand]

+│ │ ├── robot_hand_unitree.py [Controls Unitree dexterous hand]

│ │

│ ├── utils

│ │ ├── episode_writer.py [Used to record data for imitation learning]

-│ │ ├── weighted_moving_filter.py [For filtering joint data]

-│ │ ├── rerun_visualizer.py [For visualizing data during recording]

+│ │ ├── weighted_moving_filter.py [Filter for joint data]

+│ │ ├── rerun_visualizer.py [Visualizes recorded data]

+│ │ ├── ipc.py [Handles inter-process communication with proxy programs]

+│ │ ├── motion_switcher.py [Switches motion control states]

+│ │ ├── sim_state_topic.py [For simulation deployment]

│ │

-│ └── teleop_hand_and_arm.py [Startup execution code for teleoperation]

+│ └── teleop_hand_and_arm.py [Startup script for teleoperation]

+

```

# 5. 🛠️ Hardware

@@ -443,3 +530,4 @@ This code builds upon following open-source code-bases. Please visit the URLs to

7. https://github.com/zeromq/pyzmq

8. https://github.com/Dingry/BunnyVisionPro

9. https://github.com/unitreerobotics/unitree_sdk2_python

+10. https://github.com/ARCLab-MIT/beavr-bot

diff --git a/README_zh-CN.md b/README_zh-CN.md

index 87a0e37..c10db26 100644

--- a/README_zh-CN.md

+++ b/README_zh-CN.md

@@ -36,13 +36,19 @@

# 🔖 [版本说明](CHANGELOG_zh-CN.md)

-## 🏷️ v1.3

+## 🏷️ v1.4

-- 添加 [](https://github.com/unitreerobotics/xr_teleoperate/wiki) [](https://discord.gg/ZwcVwxv5rq)

+- **图像服务器**变更为 [teleimager](https://github.com/silencht/teleimager),具体请查看仓库README。

-- 支持 **IPC 模式**,默认使用 SSHKeyboard 进行输入控制。

-- 合并 **H1_2** 机器人新增运动模式支持。

-- 合并 **G1_23** 机械臂新增运动模式支持。

+- 升级 [televuer](https://github.com/silencht/televuer),具体请查看仓库README。

+

+ > 新版本的 [teleimager](https://github.com/silencht/teleimager/commit/ab5018691943433c24af4c9a7f3ea0c9a6fbaf3c) + [televuer](https://github.com/silencht/televuer/releases/tag/v3.0) 支持通过 webrtc 传输头部相机图像

+ >

+ > 支持 pass-through, ego, immersive 三种模式

+

+- 完善系统的**状态机**信息、IPC模式。

+

+- 支持 **inspire_FTP** 灵巧手。

- ···

@@ -57,11 +63,12 @@

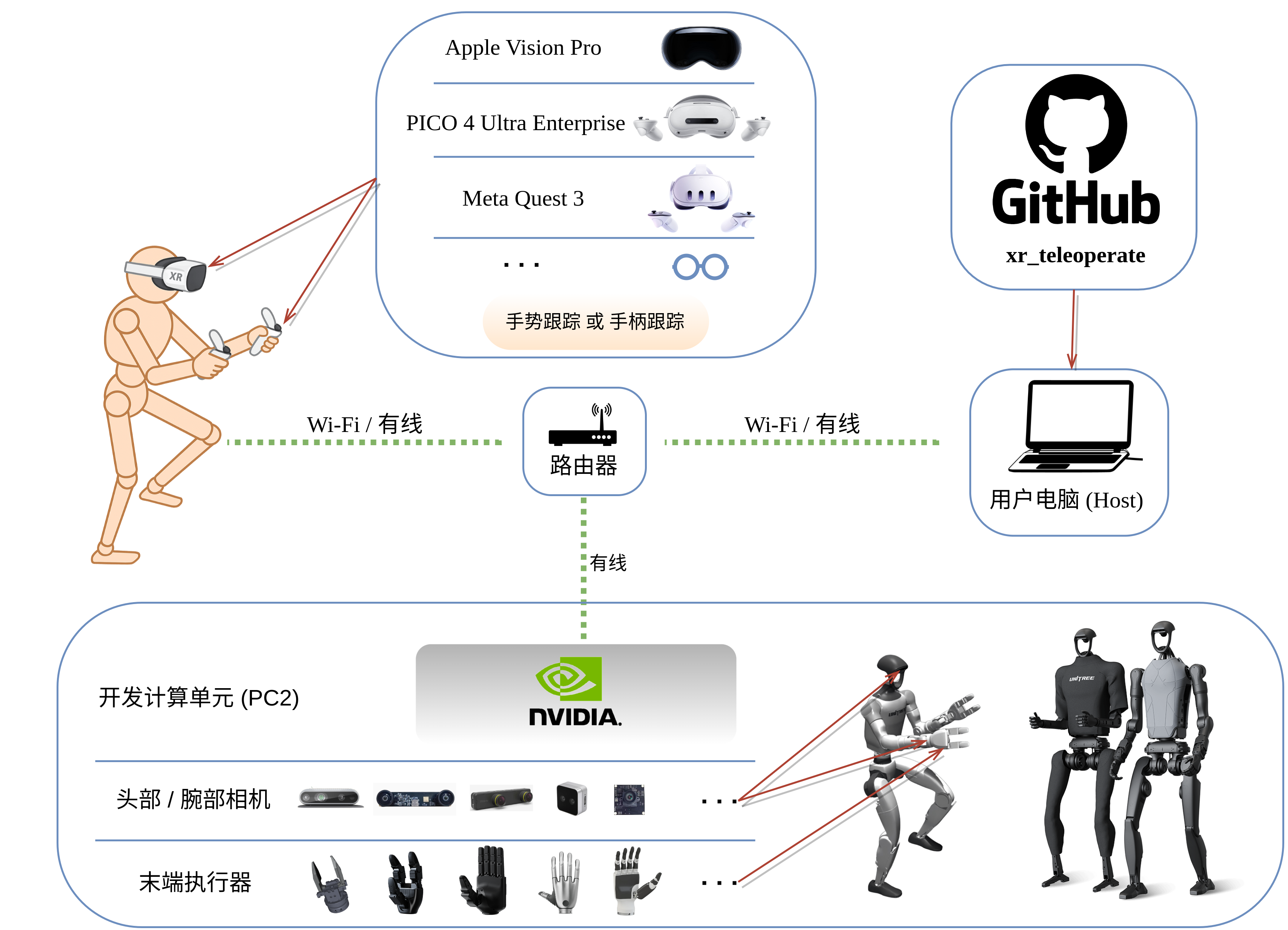

以下是系统示意图:

-

-  +

+

+

+

+

以下是本仓库目前支持的设备类型:

@@ -126,14 +133,59 @@

(tv) unitree@Host:~$ cd xr_teleoperate

# 浅克隆子模块

(tv) unitree@Host:~/xr_teleoperate$ git submodule update --init --depth 1

+```

+

+```bash

+# 安装 teleimager 模块

+(tv) unitree@Host:~/xr_teleoperate$ cd teleop/teleimager

+(tv) unitree@Host:~/xr_teleoperate/teleop/teleimager$ pip install -e . --no-deps

+```

+

+```bash

# 安装 televuer 模块

(tv) unitree@Host:~/xr_teleoperate$ cd teleop/televuer

(tv) unitree@Host:~/xr_teleoperate/teleop/televuer$ pip install -e .

-# 生成 televuer 模块所需的证书文件

+# 为 televuer 模块配置 SSL 证书,以便 XR 设备(如 Pico / Quest / Apple Vision Pro)通过 HTTPS / WebRTC 安全连接

+# 1. 生成证书文件

+# 1.1 如果您使用 pico / quest 等 xr 设备

(tv) unitree@Host:~/xr_teleoperate/teleop/televuer$ openssl req -x509 -nodes -days 365 -newkey rsa:2048 -keyout key.pem -out cert.pem

+# 1.2 如果您使用 apple vision pro 设备

+(tv) unitree@Host:~/xr_teleoperate/teleop/televuer$ openssl genrsa -out rootCA.key 2048

+(tv) unitree@Host:~/xr_teleoperate/teleop/televuer$ openssl req -x509 -new -nodes -key rootCA.key -sha256 -days 365 -out rootCA.pem -subj "/CN=xr-teleoperate"

+(tv) unitree@Host:~/xr_teleoperate/teleop/televuer$ openssl genrsa -out key.pem 2048

+(tv) unitree@Host:~/xr_teleoperate/teleop/televuer$ openssl req -new -key key.pem -out server.csr -subj "/CN=localhost"

+ ## 创建 server_ext.cnf 文件,输入以下内容(IP.2 地址应与您的 主机 IP 地址匹配,假设此处地址为 192.168.123.2。可以使用 `ifconfig` 等类似命令查询)

+(tv) unitree@Host:~/xr_teleoperate/teleop/televuer$ vim server_ext.cnf

+subjectAltName = @alt_names

+[alt_names]

+DNS.1 = localhost

+IP.1 = 192.168.123.164

+IP.2 = 192.168.123.2

+(tv) unitree@Host:~/xr_teleoperate/teleop/televuer$ openssl x509 -req -in server.csr -CA rootCA.pem -CAkey rootCA.key -CAcreateserial -out cert.pem -days 365 -sha256 -extfile server_ext.cnf

+(tv) unitree@Host:~/xr_teleoperate/teleop/televuer$ ls

+build cert.pem key.pem LICENSE pyproject.toml README.md rootCA.key rootCA.pem rootCA.srl server.csr server_ext.cnf src test

+# 通过 AirDrop 将 rootCA.pem 复制到 Apple Vision Pro 并安装它

+

+# 开启防火墙

+(tv) unitree@Host:~/xr_teleoperate/teleop/televuer$ sudo ufw allow 8012

+

+# 2. 配置证书路径,以下方式任选其一

+# 2.1 用户配置目录(可选)

+(tv) unitree@Host:~/xr_teleoperate/teleop/televuer$ mkdir -p ~/.config/xr_teleoperate/

+(tv) unitree@Host:~/xr_teleoperate/teleop/televuer$ cp cert.pem key.pem ~/.config/xr_teleoperate/

+# 2.2 环境变量配置(可选)

+(tv) unitree@Host:~/xr_teleoperate/teleop/televuer$ echo 'export XR_TELEOP_CERT="$HOME/xr_teleoperate/teleop/televuer/cert.pem"' >> ~/.bashrc

+(tv) unitree@Host:~/xr_teleoperate/teleop/televuer$ echo 'export XR_TELEOP_KEY="$HOME/xr_teleoperate/teleop/televuer/key.pem"' >> ~/.bashrc

+(tv) unitree@Host:~/xr_teleoperate/teleop/televuer$ source ~/.bashrc

+```

+

+```bash

# 安装 dex-retargeting 模块

(tv) unitree@Host:~/xr_teleoperate/teleop/televuer$ cd ../robot_control/dex-retargeting/

(tv) unitree@Host:~/xr_teleoperate/teleop/robot_control/dex-retargeting$ pip install -e .

+```

+

+```bash

# 安装本仓库所需的其他依赖库

(tv) unitree@Host:~/xr_teleoperate/teleop/robot_control/dex-retargeting$ cd ../../../

(tv) unitree@Host:~/xr_teleoperate$ pip install -r requirements.txt

@@ -168,12 +220,42 @@

>

> 您可以参考 [Harley Hahn's Guide to Unix and Linux](https://www.harley.com/unix-book/book/chapters/04.html#H) 和 [Conda User Guide](https://docs.conda.io/projects/conda/en/latest/user-guide/getting-started.html) 来深入了解这些知识。

+## 1.3 🚀 启动参数说明

+

+

+- 基础控制参数

+

+| ⚙️ 参数 | 📜 说明 | 🔘 目前可选值 | 📌 默认值 |

+| :---------------: | :----------------------------------------------------------: | :----------------------------------------------------------: | :---------------: |

+| `--frequency` | 设置录制和控制的 FPS | 任意正常范围内的浮点数 | 30.0 |

+| `--input-mode` | 选择 XR 输入模式(通过什么方式控制机器人) | `hand`(**手势跟踪**)

`controller`(**手柄跟踪**) | `hand` |

+| `--display-mode` | 选择 XR 显示模式(通过什么方式查看机器人视角) | `immersive`(沉浸式)

`ego`(通透+第一人称小窗)

`pass-through`(通透) | `immersive` |

+| `--arm` | 选择机器人设备类型(可参考 0. 📖 介绍) | `G1_29`

`G1_23`

`H1_2`

`H1` | `G1_29` |

+| `--ee` | 选择手臂的末端执行器设备类型(可参考 0. 📖 介绍) | `dex1`

`dex3`

`inspire_ftp`

`inspire_dfx`

`brainco` | 无默认值 |

+| `--img-server-ip` | 设置图像服务器的 IP 地址,用于接收图像服务流、配置 WebRTC 信令服务地址 | `IPv4` 地址 | `192.168.123.164` |

+

+- 模式开关参数

+

+| ⚙️ 参数 | 📜 说明 |

+| :----------: | :----------------------------------------------------------: |

+| `--motion` | 【启用**运动控制**模式】

开启本模式后,可在机器人运控程序运行下进行遥操作程序。

**手势跟踪**模式下,可使用 [R3遥控器](https://www.unitree.com/cn/R3) 控制机器人正常行走;**手柄跟踪**模式下,也可使用[手柄摇杆控制机器人行走](https://github.com/unitreerobotics/xr_teleoperate/blob/375cdc27605de377c698e2b89cad0e5885724ca6/teleop/teleop_hand_and_arm.py#L247-L257)。 |

+| `--headless` | 【启用**无图形界面**模式】

适用于本程序部署在开发计算单元(PC2)等无显示器情况 |

+| `--sim` | 【启用[**仿真模式**](https://github.com/unitreerobotics/unitree_sim_isaaclab)】 |

+| `--ipc` | 【进程间通信模式】

可通过进程间通信来控制 xr_teleoperate 程序的状态切换,此模式适合与代理程序进行交互 |

+| `--affinity` | 【CPU亲和模式】

设置 CPU 核心亲和性。如果你不知道这是什么,那么请不要设置它。 |

+| `--record` | 【启用**数据录制**模式】

按 **r** 键进入遥操后,按 **s** 键可开启数据录制,再次按 **s** 键可结束录制并保存本次 episode 数据。

继续按下 **s** 键可重复前述过程。 |

+| `--task-*` | 此类参数可配置录制的文件保存路径,任务目标、描述、步骤等信息 |

+

+------

+

# 2. 💻 仿真部署

## 2.1 📥 环境配置

+> 因为图像服务升级为`teleimager`,v1.4 版本仿真部署暂未上线,请暂时使用 v1.3 进行测试

+

首先,请安装 [unitree_sim_isaaclab](https://github.com/unitreerobotics/unitree_sim_isaaclab)。具体安装步骤,可参考该仓库 README 文档。

其次,启动 unitree_sim_isaaclab 仿真环境。假设使用 G1(29 DoF) 和 Dex3 灵巧手配置进行仿真,则启动命令示例如下:

@@ -204,28 +286,7 @@

本程序支持通过 XR 设备(比如手势或手柄)来控制实际机器人动作,也支持在虚拟仿真中运行。你可以根据需要,通过命令行参数来配置运行方式。

-以下是本程序的启动参数说明:

-

-- 基础控制参数

-

-| ⚙️ 参数 | 📜 说明 | 🔘 目前可选值 | 📌 默认值 |

-| :---------: | :----------------------------------------------: | :------------------------------------------------------: | :------: |

-| `--xr-mode` | 选择 XR 输入模式(通过什么方式控制机器人) | `hand`(**手势跟踪**)

`controller`(**手柄跟踪**) | `hand` |

-| `--arm` | 选择机器人设备类型(可参考 0. 📖 介绍) | `G1_29`

`G1_23`

`H1_2`

`H1` | `G1_29` |

-| `--ee` | 选择手臂的末端执行器设备类型(可参考 0. 📖 介绍) | `dex1`

`dex3`

`inspire1`

`brainco` | 无默认值 |

-

-- 模式开关参数

-

-| ⚙️ 参数 | 📜 说明 |

-| :----------: | :----------------------------------------------------------: |

-| `--record` | 【启用**数据录制**模式】

按 **r** 键进入遥操后,按 **s** 键可开启数据录制,再次按 **s** 键可结束录制并保存本次 episode 数据。

继续按下 **s** 键可重复前述过程。 |

-| `--motion` | 【启用**运动控制**模式】

开启本模式后,可在机器人运控程序运行下进行遥操作程序。

**手势跟踪**模式下,可使用 [R3遥控器](https://www.unitree.com/cn/R3) 控制机器人正常行走;**手柄跟踪**模式下,也可使用[手柄摇杆控制机器人行走](https://github.com/unitreerobotics/xr_teleoperate/blob/375cdc27605de377c698e2b89cad0e5885724ca6/teleop/teleop_hand_and_arm.py#L247-L257)。 |

-| `--headless` | 【启用**无图形界面**模式】

适用于本程序部署在开发计算单元(PC2)等无显示器情况 |

-| `--sim` | 【启用[**仿真模式**](https://github.com/unitreerobotics/unitree_sim_isaaclab)】 |

-

-------

-

-根据上述参数说明以及仿真环境配置,我们假设选择**手势跟踪**来控制 G1(29 DoF) + Dex3 灵巧手设备,同时开启仿真模式和数据录制模式。

+根据 1.3 节参数说明以及仿真环境配置,我们假设选择**手势跟踪**来控制 G1(29 DoF) + Dex3 灵巧手设备,同时开启仿真模式和数据录制模式。

则启动命令如下所示:

@@ -250,7 +311,21 @@

2. 连接对应的 WiFi 热点

-3. 打开浏览器应用(比如 Safari 或 PICO Browser),输入并访问网址:https://192.168.123.2:8012?ws=wss://192.168.123.2:8012

+3. 如果您头部相机开启了WebRTC功能(`cam_config_server.yaml => head_camera => enable_webrtc: true`),那么执行此步骤,否则直接跳到第 4 步。打开浏览器应用(比如 Safari 或 PICO Browser),输入并访问网址:https://192.168.123.164:60001

+

+ > 注意1:此 IP 地址为开启teleimager图像服务的 PC2 设备 IP

+

+ > 注意2:此时可能弹出类似第4步相同的警告提示。请点击`Advanced`按钮后,继续点击 `Proceed to ip (unsafe)` 按钮,使用非安全方式继续登录WebRTC图像服务器。进入后,点击左上角`start`按钮,如果预览到头部相机图像,那么操作成功。

+ >

+ >

+ >

+ >  + >

+ >

+ >

+ >

+ >

+ > 注意3:此步骤目的有两个:一是检测头部相机服务是否正常;二是手动信任 `webrtc` 自签名证书。相同设备与自签名证书条件下执行一次本步骤后,再次启动时可跳过该步。

+

+4. 打开浏览器应用(比如 Safari 或 PICO Browser),输入并访问网址:https://192.168.123.2:8012/?ws=wss://192.168.123.2:8012

> 注意1:此 IP 地址应与您的 **主机** IP 地址匹配。该地址可以使用 `ifconfig` 等类似命令查询。

@@ -262,7 +337,7 @@

-4. 进入`Vuer`网页界面后,点击 **`Virtual Reality`** 按钮。在允许后续的所有对话框后,启动 VR 会话。界面如下图所示:

+5. 进入`Vuer`网页界面后,点击 **`Virtual Reality`** 按钮。在允许后续的所有对话框后,启动 VR 会话。界面如下图所示:

@@ -270,7 +345,7 @@

-5. 此时,您将会在 XR 头显设备中看到机器人的第一人称视野。同时,终端打印出链接建立的信息:

+6. 此时,您将会在 XR 头显设备中看到机器人的第一人称视野。同时,终端打印出链接建立的信息:

```bash

websocket is connected. id:dbb8537d-a58c-4c57-b49d-cbb91bd25b90

@@ -278,7 +353,7 @@

Uplink task running. id:dbb8537d-a58c-4c57-b49d-cbb91bd25b90

```

-6. 然后,将手臂形状摆放到与**机器人初始姿态**相接近的姿势。这一步是为了避免在实物部署时,初始位姿差距过大导致机器人产生过大的摆动。

+7. 然后,将手臂形状摆放到与**机器人初始姿态**相接近的姿势。这一步是为了避免在实物部署时,初始位姿差距过大导致机器人产生过大的摆动。

机器人初始姿态示意图如下:

@@ -288,9 +363,9 @@

-7. 最后,在终端中按下 **r** 键后,正式开启遥操作程序。此时,您可以远程控制机器人的手臂(和灵巧手)

+8. 最后,在终端中按下 **r** 键后,正式开启遥操作程序。此时,您可以远程控制机器人的手臂(和灵巧手)

-8. 在遥操过程中,按 **s** 键可开启数据录制,再次按 **s** 键可结束录制并保存数据(该过程可重复)

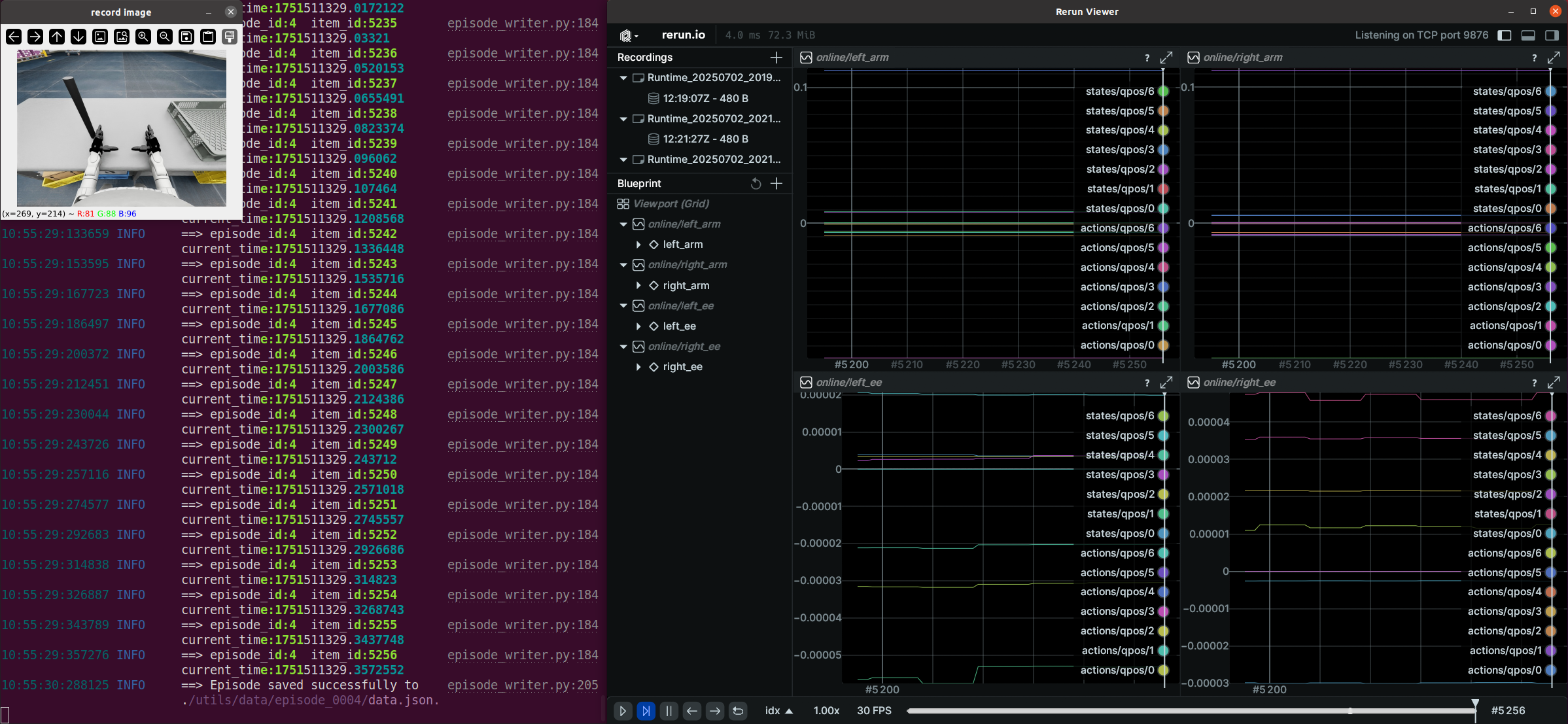

+9. 在遥操过程中,按 **s** 键可开启数据录制,再次按 **s** 键可结束录制并保存数据(该过程可重复)

数据录制过程示意图如下:

@@ -303,10 +378,12 @@

> 注意1:录制的数据默认存储在 `xr_teleoperate/teleop/utils/data` 中。数据使用说明见此仓库: [unitree_IL_lerobot](https://github.com/unitreerobotics/unitree_IL_lerobot/blob/main/README_zh.md#%E6%95%B0%E6%8D%AE%E9%87%87%E9%9B%86%E4%B8%8E%E8%BD%AC%E6%8D%A2)。

>

> 注意2:请在录制数据时注意您的硬盘空间大小。

+>

+> 注意3: v1.4 及以上版本,record image窗口取消。

## 2.3 🔚 退出

-要退出程序,可以在终端窗口(或 'record image' 窗口)中按下 **q** 键。

+要退出程序,可以在终端窗口中按下 **q** 键。

@@ -318,42 +395,52 @@

仿真环境中已经自动开启了图像服务。实物部署时,需要针对自身相机硬件类型,手动开启图像服务。步骤如下:

-将 `xr_teleoperate/teleop/image_server` 目录中的 `image_server.py` 复制到宇树机器人(G1/H1/H1_2 等)的 **开发计算单元 PC2**。

+1. 在宇树机器人(G1/H1/H1_2 等)的 **开发计算单元 PC2** 中安装图像服务程序

```bash

-# 提醒:可以通过scp命令将image_server.py传输到PC2,然后使用ssh远程登录PC2后执行它。

-# 假设开发计算单元PC2的ip地址为192.168.123.164,那么传输过程示例如下:

-# 先ssh登录PC2,创建图像服务器的文件夹

-(tv) unitree@Host:~$ ssh unitree@192.168.123.164 "mkdir -p ~/image_server"

-# 将本地的image_server.py拷贝至PC2的~/image_server目录下

-(tv) unitree@Host:~$ scp ~/xr_teleoperate/teleop/image_server/image_server.py unitree@192.168.123.164:~/image_server/

+# ssh登录PC2,下载图像服务程序仓库

+(base) unitree@PC2:~$ cd ~

+(base) unitree@PC2:~$ git clone https://github.com/silencht/teleimager

+# 根据 teleimager 仓库的 https://github.com/silencht/teleimager/blob/main/README.md 文档说明来配置环境

```

-并在 **PC2** 上执行以下命令:

+2. 在**本地主机**上执行以下命令:

```bash

-# 提醒:目前该图像传输程序支持OpenCV和Realsense SDK两种读取图像的方式,请阅读image_server.py的ImageServer类的注释以便您根据自己的相机硬件来配置自己的图像传输服务。

-# 现在位于宇树机器人 PC2 终端

-unitree@PC2:~/image_server$ python image_server.py

-# 您可以看到终端输出如下:

-# {'fps': 30, 'head_camera_type': 'opencv', 'head_camera_image_shape': [480, 1280], 'head_camera_id_numbers': [0]}

-# [Image Server] Head camera 0 resolution: 480.0 x 1280.0

-# [Image Server] Image server has started, waiting for client connections...

+# 将本地主机 xr_teleoperate/teleop/televuer 路径下在 1.1 节配置的 key.pem 和 cert.pem 文件拷贝到 PC2 对应路径

+# 这两个文件是 teleimager 启动 WebRTC 服务时所必须的

+(tv) unitree@Host:~$ scp ~/xr_teleoperate/teleop/televuer/key.pem ~/xr_teleoperate/teleop/televuer/cert.pem unitree@192.168.123.164:~/teleimager

+# 根据 teleimager 仓库的 https://github.com/silencht/teleimager/blob/main/README.md 文档说明,在PC2配置证书路径,例如

+(teleimager) unitree@PC2:~$ cd teleimager

+(teleimager) unitree@PC2:~$ mkdir -p ~/.config/xr_teleoperate/

+(teleimager) unitree@PC2:~/teleimager$ cp cert.pem key.pem ~/.config/xr_teleoperate/

```

-在图像服务启动后,您可以在 **主机** 终端上使用 `image_client.py` 测试通信是否成功:

+3. 在**开发计算单元 PC2** 中按照 teleimager 文档配置 cam_config_server.yaml 并启动图像服务程序

```bash

-(tv) unitree@Host:~/xr_teleoperate/teleop/image_server$ python image_client.py

+(teleimager) unitree@PC2:~/image_server$ python -m teleimager.image_server

+# 下面命令作用相同

+(teleimager) unitree@PC2:~/image_server$ teleimager-server

```

+4. 在**本地主机**上执行以下命令订阅图像:

+

+```bash

+(tv) unitree@Host:~$ cd ~/xr_teleoperate/teleop/teleimager/src

+(tv) unitree@Host:~/xr_teleoperate/teleop/teleimager/src$ python -m teleimager.image_client --host 192.168.123.164

+# 如果设置了 WebRTC 图像流,那么可以在浏览器中通过 https://192.168.123.164:60001 打开网址,随后点击 Start 按钮进行测试

+```

+

+

+

## 3.2 ✋ Inspire 手部服务(可选)

> 注意1:如果选择的机器人配置中没有使用 Inspire 系列灵巧手,那么请忽略本节内容。

>

> 注意2:如果选择的G1机器人配置,且使用 [Inspire DFX 灵巧手](https://support.unitree.com/home/zh/G1_developer/inspire_dfx_dexterous_hand),相关issue [#46](https://github.com/unitreerobotics/xr_teleoperate/issues/46)。

>

-> 注意3:如果选择的机器人配置中使用了 [Inspire FTP 灵巧手](https://support.unitree.com/home/zh/G1_developer/inspire_ftp_dexterity_hand),相关issue [ #48](https://github.com/unitreerobotics/xr_teleoperate/issues/48)。

+> 注意3:如果选择的机器人配置中使用了 [Inspire FTP 灵巧手](https://support.unitree.com/home/zh/G1_developer/inspire_ftp_dexterity_hand),相关issue [ #48](https://github.com/unitreerobotics/xr_teleoperate/issues/48)。目前已经支持 FTP 灵巧手,请您查阅 `--ee` 参数。

首先,使用 [此链接: DFX_inspire_service](https://github.com/unitreerobotics/DFX_inspire_service) 克隆灵巧手控制接口程序,然后将其复制到宇树机器人的**PC2**。

@@ -396,8 +483,7 @@ sudo ./brainco_hand --id 127 --serial /dev/ttyUSB2

>

> 1. 所有人员必须与机器人保持安全距离,以防止任何潜在的危险!

> 2. 在运行此程序之前,请确保至少阅读一次 [官方文档](https://support.unitree.com/home/zh/Teleoperation)。

-> 3. 没有开启**运动控制**模式(`--motion`)时,请务必确保机器人已经进入 [调试模式(L2+R2)](https://support.unitree.com/home/zh/G1_developer/remote_control),以停止运动控制程序发送指令,这样可以避免潜在的指令冲突问题。

-> 4. 如果要开启**运动控制**模式遥操作,请提前使用 [R3遥控器](https://www.unitree.com/cn/R3) 确保机器人进入主运控模式。

+> 3. 如果要开启**运动控制**模式遥操作,请提前使用 [R3遥控器](https://www.unitree.com/cn/R3) 确保机器人进入主运控模式。

> 5. 开启**运动控制**模式(`--motion`)时:

> - 右手柄按键 `A` 为遥操作**退出**功能按键;

> - 左手柄和右手柄的两个摇杆按键同时按下为软急停按键,机器人会退出运控程序并进入阻尼模式,该功能只在必要情况下使用

@@ -427,12 +513,8 @@ xr_teleoperate/

│

├── assets [存储机器人 URDF 相关文件]

│

-├── hardware [存储 3D 打印模组]

-│

├── teleop

-│ ├── image_server

-│ │ ├── image_client.py [用于从机器人图像服务器接收图像数据]

-│ │ ├── image_server.py [从摄像头捕获图像并通过网络发送(在机器人板载计算单元PC2上运行)]

+│ ├── teleimager [全新的图像服务库,支持多种特性]

│ │

│ ├── televuer

│ │ ├── src/televuer

@@ -454,6 +536,9 @@ xr_teleoperate/

│ │ ├── episode_writer.py [用于记录模仿学习的数据]

│ │ ├── weighted_moving_filter.py [用于过滤关节数据的滤波器]

│ │ ├── rerun_visualizer.py [用于可视化录制数据]

+│ │ ├── ipc.py [用于和代理程序进行进程间通信]

+│ │ ├── motion_switcher.py [用于切换运控状态]

+│ │ ├── sim_state_topic.py [用于仿真部署]

│ │

│ │──teleop_hand_and_arm.py [遥操作的启动执行代码]

```

@@ -477,3 +562,4 @@ xr_teleoperate/

7. https://github.com/zeromq/pyzmq

8. https://github.com/Dingry/BunnyVisionPro

9. https://github.com/unitreerobotics/unitree_sdk2_python

+10. https://github.com/ARCLab-MIT/beavr-bot

diff --git a/teleop/image_server/image_client.py b/teleop/image_server/image_client.py

deleted file mode 100644

index 9c42581..0000000

--- a/teleop/image_server/image_client.py

+++ /dev/null

@@ -1,197 +0,0 @@

-import cv2

-import zmq

-import numpy as np

-import time

-import struct

-from collections import deque

-from multiprocessing import shared_memory

-import logging_mp

-logger_mp = logging_mp.get_logger(__name__)

-

-class ImageClient:

- def __init__(self, tv_img_shape = None, tv_img_shm_name = None, wrist_img_shape = None, wrist_img_shm_name = None,

- image_show = False, server_address = "192.168.123.164", port = 5555, Unit_Test = False):

- """

- tv_img_shape: User's expected head camera resolution shape (H, W, C). It should match the output of the image service terminal.

-

- tv_img_shm_name: Shared memory is used to easily transfer images across processes to the Vuer.

-

- wrist_img_shape: User's expected wrist camera resolution shape (H, W, C). It should maintain the same shape as tv_img_shape.

-

- wrist_img_shm_name: Shared memory is used to easily transfer images.

-

- image_show: Whether to display received images in real time.

-

- server_address: The ip address to execute the image server script.

-

- port: The port number to bind to. It should be the same as the image server.

-

- Unit_Test: When both server and client are True, it can be used to test the image transfer latency, \

- network jitter, frame loss rate and other information.

- """

- self.running = True

- self._image_show = image_show

- self._server_address = server_address

- self._port = port

-

- self.tv_img_shape = tv_img_shape

- self.wrist_img_shape = wrist_img_shape

-

- self.tv_enable_shm = False

- if self.tv_img_shape is not None and tv_img_shm_name is not None:

- self.tv_image_shm = shared_memory.SharedMemory(name=tv_img_shm_name)

- self.tv_img_array = np.ndarray(tv_img_shape, dtype = np.uint8, buffer = self.tv_image_shm.buf)

- self.tv_enable_shm = True

-

- self.wrist_enable_shm = False

- if self.wrist_img_shape is not None and wrist_img_shm_name is not None:

- self.wrist_image_shm = shared_memory.SharedMemory(name=wrist_img_shm_name)

- self.wrist_img_array = np.ndarray(wrist_img_shape, dtype = np.uint8, buffer = self.wrist_image_shm.buf)

- self.wrist_enable_shm = True

-

- # Performance evaluation parameters

- self._enable_performance_eval = Unit_Test

- if self._enable_performance_eval:

- self._init_performance_metrics()

-

- def _init_performance_metrics(self):

- self._frame_count = 0 # Total frames received

- self._last_frame_id = -1 # Last received frame ID

-

- # Real-time FPS calculation using a time window

- self._time_window = 1.0 # Time window size (in seconds)

- self._frame_times = deque() # Timestamps of frames received within the time window

-

- # Data transmission quality metrics

- self._latencies = deque() # Latencies of frames within the time window

- self._lost_frames = 0 # Total lost frames

- self._total_frames = 0 # Expected total frames based on frame IDs

-

- def _update_performance_metrics(self, timestamp, frame_id, receive_time):

- # Update latency

- latency = receive_time - timestamp

- self._latencies.append(latency)

-

- # Remove latencies outside the time window

- while self._latencies and self._frame_times and self._latencies[0] < receive_time - self._time_window:

- self._latencies.popleft()

-

- # Update frame times

- self._frame_times.append(receive_time)

- # Remove timestamps outside the time window

- while self._frame_times and self._frame_times[0] < receive_time - self._time_window:

- self._frame_times.popleft()

-

- # Update frame counts for lost frame calculation

- expected_frame_id = self._last_frame_id + 1 if self._last_frame_id != -1 else frame_id

- if frame_id != expected_frame_id:

- lost = frame_id - expected_frame_id

- if lost < 0:

- logger_mp.info(f"[Image Client] Received out-of-order frame ID: {frame_id}")

- else:

- self._lost_frames += lost

- logger_mp.warning(f"[Image Client] Detected lost frames: {lost}, Expected frame ID: {expected_frame_id}, Received frame ID: {frame_id}")

- self._last_frame_id = frame_id

- self._total_frames = frame_id + 1

-

- self._frame_count += 1

-

- def _print_performance_metrics(self, receive_time):

- if self._frame_count % 30 == 0:

- # Calculate real-time FPS

- real_time_fps = len(self._frame_times) / self._time_window if self._time_window > 0 else 0

-

- # Calculate latency metrics

- if self._latencies:

- avg_latency = sum(self._latencies) / len(self._latencies)

- max_latency = max(self._latencies)

- min_latency = min(self._latencies)

- jitter = max_latency - min_latency

- else:

- avg_latency = max_latency = min_latency = jitter = 0

-

- # Calculate lost frame rate

- lost_frame_rate = (self._lost_frames / self._total_frames) * 100 if self._total_frames > 0 else 0

-

- logger_mp.info(f"[Image Client] Real-time FPS: {real_time_fps:.2f}, Avg Latency: {avg_latency*1000:.2f} ms, Max Latency: {max_latency*1000:.2f} ms, \

- Min Latency: {min_latency*1000:.2f} ms, Jitter: {jitter*1000:.2f} ms, Lost Frame Rate: {lost_frame_rate:.2f}%")

-

- def _close(self):

- self._socket.close()

- self._context.term()

- if self._image_show:

- cv2.destroyAllWindows()

- logger_mp.info("Image client has been closed.")

-

-

- def receive_process(self):

- # Set up ZeroMQ context and socket

- self._context = zmq.Context()

- self._socket = self._context.socket(zmq.SUB)

- self._socket.connect(f"tcp://{self._server_address}:{self._port}")

- self._socket.setsockopt_string(zmq.SUBSCRIBE, "")

-

- logger_mp.info("Image client has started, waiting to receive data...")

- try:

- while self.running:

- # Receive message

- message = self._socket.recv()

- receive_time = time.time()

-

- if self._enable_performance_eval:

- header_size = struct.calcsize('dI')

- try:

- # Attempt to extract header and image data

- header = message[:header_size]

- jpg_bytes = message[header_size:]

- timestamp, frame_id = struct.unpack('dI', header)

- except struct.error as e:

- logger_mp.warning(f"[Image Client] Error unpacking header: {e}, discarding message.")

- continue

- else:

- # No header, entire message is image data

- jpg_bytes = message

- # Decode image

- np_img = np.frombuffer(jpg_bytes, dtype=np.uint8)

- current_image = cv2.imdecode(np_img, cv2.IMREAD_COLOR)

- if current_image is None:

- logger_mp.warning("[Image Client] Failed to decode image.")

- continue

-

- if self.tv_enable_shm:

- np.copyto(self.tv_img_array, np.array(current_image[:, :self.tv_img_shape[1]]))

-

- if self.wrist_enable_shm:

- np.copyto(self.wrist_img_array, np.array(current_image[:, -self.wrist_img_shape[1]:]))

-

- if self._image_show:

- height, width = current_image.shape[:2]

- resized_image = cv2.resize(current_image, (width // 2, height // 2))

- cv2.imshow('Image Client Stream', resized_image)

- if cv2.waitKey(1) & 0xFF == ord('q'):

- self.running = False

-

- if self._enable_performance_eval:

- self._update_performance_metrics(timestamp, frame_id, receive_time)

- self._print_performance_metrics(receive_time)

-

- except KeyboardInterrupt:

- logger_mp.info("Image client interrupted by user.")

- except Exception as e:

- logger_mp.warning(f"[Image Client] An error occurred while receiving data: {e}")

- finally:

- self._close()

-

-if __name__ == "__main__":

- # example1

- # tv_img_shape = (480, 1280, 3)

- # img_shm = shared_memory.SharedMemory(create=True, size=np.prod(tv_img_shape) * np.uint8().itemsize)

- # img_array = np.ndarray(tv_img_shape, dtype=np.uint8, buffer=img_shm.buf)

- # img_client = ImageClient(tv_img_shape = tv_img_shape, tv_img_shm_name = img_shm.name)

- # img_client.receive_process()

-

- # example2

- # Initialize the client with performance evaluation enabled

- # client = ImageClient(image_show = True, server_address='127.0.0.1', Unit_Test=True) # local test

- client = ImageClient(image_show = True, server_address='192.168.123.164', Unit_Test=False) # deployment test

- client.receive_process()

\ No newline at end of file

diff --git a/teleop/image_server/image_server.py b/teleop/image_server/image_server.py

deleted file mode 100644

index 19799fb..0000000

--- a/teleop/image_server/image_server.py

+++ /dev/null

@@ -1,321 +0,0 @@

-import cv2

-import zmq

-import time

-import struct

-from collections import deque

-import numpy as np

-import pyrealsense2 as rs

-import logging_mp

-logger_mp = logging_mp.get_logger(__name__, level=logging_mp.DEBUG)

-

-

-class RealSenseCamera(object):

- def __init__(self, img_shape, fps, serial_number=None, enable_depth=False) -> None:

- """

- img_shape: [height, width]

- serial_number: serial number

- """

- self.img_shape = img_shape

- self.fps = fps

- self.serial_number = serial_number

- self.enable_depth = enable_depth

-

- align_to = rs.stream.color

- self.align = rs.align(align_to)

- self.init_realsense()

-

- def init_realsense(self):

-

- self.pipeline = rs.pipeline()

- config = rs.config()

- if self.serial_number is not None:

- config.enable_device(self.serial_number)

-

- config.enable_stream(rs.stream.color, self.img_shape[1], self.img_shape[0], rs.format.bgr8, self.fps)

-

- if self.enable_depth:

- config.enable_stream(rs.stream.depth, self.img_shape[1], self.img_shape[0], rs.format.z16, self.fps)

-

- profile = self.pipeline.start(config)

- self._device = profile.get_device()

- if self._device is None:

- logger_mp.error('[Image Server] pipe_profile.get_device() is None .')

- if self.enable_depth:

- assert self._device is not None

- depth_sensor = self._device.first_depth_sensor()

- self.g_depth_scale = depth_sensor.get_depth_scale()

-

- self.intrinsics = profile.get_stream(rs.stream.color).as_video_stream_profile().get_intrinsics()

-

- def get_frame(self):

- frames = self.pipeline.wait_for_frames()

- aligned_frames = self.align.process(frames)

- color_frame = aligned_frames.get_color_frame()

-

- if self.enable_depth:

- depth_frame = aligned_frames.get_depth_frame()

-

- if not color_frame:

- return None

-

- color_image = np.asanyarray(color_frame.get_data())

- # color_image = cv2.cvtColor(color_image, cv2.COLOR_BGR2RGB)

- depth_image = np.asanyarray(depth_frame.get_data()) if self.enable_depth else None

- return color_image, depth_image

-

- def release(self):

- self.pipeline.stop()

-

-

-class OpenCVCamera():

- def __init__(self, device_id, img_shape, fps):

- """

- decive_id: /dev/video* or *

- img_shape: [height, width]

- """

- self.id = device_id

- self.fps = fps

- self.img_shape = img_shape

- self.cap = cv2.VideoCapture(self.id, cv2.CAP_V4L2)

- self.cap.set(cv2.CAP_PROP_FOURCC, cv2.VideoWriter.fourcc('M', 'J', 'P', 'G'))

- self.cap.set(cv2.CAP_PROP_FRAME_HEIGHT, self.img_shape[0])

- self.cap.set(cv2.CAP_PROP_FRAME_WIDTH, self.img_shape[1])

- self.cap.set(cv2.CAP_PROP_FPS, self.fps)

-

- # Test if the camera can read frames

- if not self._can_read_frame():

- logger_mp.error(f"[Image Server] Camera {self.id} Error: Failed to initialize the camera or read frames. Exiting...")

- self.release()

-

- def _can_read_frame(self):

- success, _ = self.cap.read()

- return success

-

- def release(self):

- self.cap.release()

-

- def get_frame(self):

- ret, color_image = self.cap.read()

- if not ret:

- return None

- return color_image

-

-

-class ImageServer:

- def __init__(self, config, port = 5555, Unit_Test = False):

- """

- config example1:

- {

- 'fps':30 # frame per second

- 'head_camera_type': 'opencv', # opencv or realsense

- 'head_camera_image_shape': [480, 1280], # Head camera resolution [height, width]

- 'head_camera_id_numbers': [0], # '/dev/video0' (opencv)

- 'wrist_camera_type': 'realsense',

- 'wrist_camera_image_shape': [480, 640], # Wrist camera resolution [height, width]

- 'wrist_camera_id_numbers': ["218622271789", "241222076627"], # realsense camera's serial number

- }

-

- config example2:

- {

- 'fps':30 # frame per second

- 'head_camera_type': 'realsense', # opencv or realsense

- 'head_camera_image_shape': [480, 640], # Head camera resolution [height, width]

- 'head_camera_id_numbers': ["218622271739"], # realsense camera's serial number

- 'wrist_camera_type': 'opencv',

- 'wrist_camera_image_shape': [480, 640], # Wrist camera resolution [height, width]

- 'wrist_camera_id_numbers': [0,1], # '/dev/video0' and '/dev/video1' (opencv)

- }

-

- If you are not using the wrist camera, you can comment out its configuration, like this below:

- config:

- {

- 'fps':30 # frame per second

- 'head_camera_type': 'opencv', # opencv or realsense

- 'head_camera_image_shape': [480, 1280], # Head camera resolution [height, width]

- 'head_camera_id_numbers': [0], # '/dev/video0' (opencv)

- #'wrist_camera_type': 'realsense',

- #'wrist_camera_image_shape': [480, 640], # Wrist camera resolution [height, width]

- #'wrist_camera_id_numbers': ["218622271789", "241222076627"], # serial number (realsense)

- }

- """

- logger_mp.info(config)

- self.fps = config.get('fps', 30)

- self.head_camera_type = config.get('head_camera_type', 'opencv')

- self.head_image_shape = config.get('head_camera_image_shape', [480, 640]) # (height, width)

- self.head_camera_id_numbers = config.get('head_camera_id_numbers', [0])

-

- self.wrist_camera_type = config.get('wrist_camera_type', None)

- self.wrist_image_shape = config.get('wrist_camera_image_shape', [480, 640]) # (height, width)

- self.wrist_camera_id_numbers = config.get('wrist_camera_id_numbers', None)

-

- self.port = port

- self.Unit_Test = Unit_Test

-

-

- # Initialize head cameras

- self.head_cameras = []

- if self.head_camera_type == 'opencv':

- for device_id in self.head_camera_id_numbers:

- camera = OpenCVCamera(device_id=device_id, img_shape=self.head_image_shape, fps=self.fps)

- self.head_cameras.append(camera)

- elif self.head_camera_type == 'realsense':

- for serial_number in self.head_camera_id_numbers:

- camera = RealSenseCamera(img_shape=self.head_image_shape, fps=self.fps, serial_number=serial_number)

- self.head_cameras.append(camera)

- else:

- logger_mp.warning(f"[Image Server] Unsupported head_camera_type: {self.head_camera_type}")

-

- # Initialize wrist cameras if provided

- self.wrist_cameras = []

- if self.wrist_camera_type and self.wrist_camera_id_numbers:

- if self.wrist_camera_type == 'opencv':

- for device_id in self.wrist_camera_id_numbers:

- camera = OpenCVCamera(device_id=device_id, img_shape=self.wrist_image_shape, fps=self.fps)

- self.wrist_cameras.append(camera)

- elif self.wrist_camera_type == 'realsense':

- for serial_number in self.wrist_camera_id_numbers:

- camera = RealSenseCamera(img_shape=self.wrist_image_shape, fps=self.fps, serial_number=serial_number)

- self.wrist_cameras.append(camera)

- else:

- logger_mp.warning(f"[Image Server] Unsupported wrist_camera_type: {self.wrist_camera_type}")

-

- # Set ZeroMQ context and socket

- self.context = zmq.Context()

- self.socket = self.context.socket(zmq.PUB)

- self.socket.bind(f"tcp://*:{self.port}")

-

- if self.Unit_Test:

- self._init_performance_metrics()

-

- for cam in self.head_cameras:

- if isinstance(cam, OpenCVCamera):

- logger_mp.info(f"[Image Server] Head camera {cam.id} resolution: {cam.cap.get(cv2.CAP_PROP_FRAME_HEIGHT)} x {cam.cap.get(cv2.CAP_PROP_FRAME_WIDTH)}")

- elif isinstance(cam, RealSenseCamera):

- logger_mp.info(f"[Image Server] Head camera {cam.serial_number} resolution: {cam.img_shape[0]} x {cam.img_shape[1]}")

- else:

- logger_mp.warning("[Image Server] Unknown camera type in head_cameras.")

-

- for cam in self.wrist_cameras:

- if isinstance(cam, OpenCVCamera):

- logger_mp.info(f"[Image Server] Wrist camera {cam.id} resolution: {cam.cap.get(cv2.CAP_PROP_FRAME_HEIGHT)} x {cam.cap.get(cv2.CAP_PROP_FRAME_WIDTH)}")

- elif isinstance(cam, RealSenseCamera):

- logger_mp.info(f"[Image Server] Wrist camera {cam.serial_number} resolution: {cam.img_shape[0]} x {cam.img_shape[1]}")

- else:

- logger_mp.warning("[Image Server] Unknown camera type in wrist_cameras.")

-

- logger_mp.info("[Image Server] Image server has started, waiting for client connections...")

-

-

-

- def _init_performance_metrics(self):

- self.frame_count = 0 # Total frames sent

- self.time_window = 1.0 # Time window for FPS calculation (in seconds)

- self.frame_times = deque() # Timestamps of frames sent within the time window

- self.start_time = time.time() # Start time of the streaming

-

- def _update_performance_metrics(self, current_time):

- # Add current time to frame times deque

- self.frame_times.append(current_time)

- # Remove timestamps outside the time window

- while self.frame_times and self.frame_times[0] < current_time - self.time_window:

- self.frame_times.popleft()

- # Increment frame count

- self.frame_count += 1

-

- def _print_performance_metrics(self, current_time):

- if self.frame_count % 30 == 0:

- elapsed_time = current_time - self.start_time

- real_time_fps = len(self.frame_times) / self.time_window

- logger_mp.info(f"[Image Server] Real-time FPS: {real_time_fps:.2f}, Total frames sent: {self.frame_count}, Elapsed time: {elapsed_time:.2f} sec")

-

- def _close(self):

- for cam in self.head_cameras:

- cam.release()

- for cam in self.wrist_cameras:

- cam.release()

- self.socket.close()

- self.context.term()

- logger_mp.info("[Image Server] The server has been closed.")

-

- def send_process(self):

- try:

- while True:

- head_frames = []

- for cam in self.head_cameras:

- if self.head_camera_type == 'opencv':

- color_image = cam.get_frame()

- if color_image is None:

- logger_mp.error("[Image Server] Head camera frame read is error.")

- break

- elif self.head_camera_type == 'realsense':

- color_image, depth_iamge = cam.get_frame()

- if color_image is None:

- logger_mp.error("[Image Server] Head camera frame read is error.")

- break

- head_frames.append(color_image)

- if len(head_frames) != len(self.head_cameras):

- break

- head_color = cv2.hconcat(head_frames)

-

- if self.wrist_cameras:

- wrist_frames = []

- for cam in self.wrist_cameras:

- if self.wrist_camera_type == 'opencv':

- color_image = cam.get_frame()

- if color_image is None:

- logger_mp.error("[Image Server] Wrist camera frame read is error.")

- break

- elif self.wrist_camera_type == 'realsense':

- color_image, depth_iamge = cam.get_frame()

- if color_image is None:

- logger_mp.error("[Image Server] Wrist camera frame read is error.")

- break

- wrist_frames.append(color_image)

- wrist_color = cv2.hconcat(wrist_frames)

-

- # Concatenate head and wrist frames

- full_color = cv2.hconcat([head_color, wrist_color])

- else:

- full_color = head_color

-

- ret, buffer = cv2.imencode('.jpg', full_color)

- if not ret:

- logger_mp.error("[Image Server] Frame imencode is failed.")

- continue

-

- jpg_bytes = buffer.tobytes()

-

- if self.Unit_Test:

- timestamp = time.time()

- frame_id = self.frame_count

- header = struct.pack('dI', timestamp, frame_id) # 8-byte double, 4-byte unsigned int

- message = header + jpg_bytes

- else:

- message = jpg_bytes

-

- self.socket.send(message)

-

- if self.Unit_Test:

- current_time = time.time()

- self._update_performance_metrics(current_time)

- self._print_performance_metrics(current_time)

-

- except KeyboardInterrupt:

- logger_mp.warning("[Image Server] Interrupted by user.")

- finally:

- self._close()

-

-

-if __name__ == "__main__":

- config = {

- 'fps': 30,

- 'head_camera_type': 'opencv',

- 'head_camera_image_shape': [480, 1280], # Head camera resolution

- 'head_camera_id_numbers': [0],

- 'wrist_camera_type': 'opencv',

- 'wrist_camera_image_shape': [480, 640], # Wrist camera resolution

- 'wrist_camera_id_numbers': [2, 4],

- }

-

- server = ImageServer(config, Unit_Test=False)

- server.send_process()

\ No newline at end of file

diff --git a/teleop/robot_control/robot_arm.py b/teleop/robot_control/robot_arm.py

index acaa8e2..79d6c4c 100644

--- a/teleop/robot_control/robot_arm.py

+++ b/teleop/robot_control/robot_arm.py

@@ -114,7 +114,7 @@ class G1_29_ArmController:

self.all_motor_q = self.get_current_motor_q()

logger_mp.debug(f"Current all body motor state q:\n{self.all_motor_q} \n")

logger_mp.debug(f"Current two arms motor state q:\n{self.get_current_dual_arm_q()}\n")

- logger_mp.info("Lock all joints except two arms...\n")

+ logger_mp.info("Lock all joints except two arms...")

arm_indices = set(member.value for member in G1_29_JointArmIndex)

for id in G1_29_JointIndex:

@@ -134,7 +134,7 @@ class G1_29_ArmController:

self.msg.motor_cmd[id].kp = self.kp_high

self.msg.motor_cmd[id].kd = self.kd_high

self.msg.motor_cmd[id].q = self.all_motor_q[id]

- logger_mp.info("Lock OK!\n")

+ logger_mp.info("Lock OK!")

# initialize publish thread

self.publish_thread = threading.Thread(target=self._ctrl_motor_state)

@@ -142,7 +142,7 @@ class G1_29_ArmController:

self.publish_thread.daemon = True

self.publish_thread.start()

- logger_mp.info("Initialize G1_29_ArmController OK!\n")

+ logger_mp.info("Initialize G1_29_ArmController OK!")

def _subscribe_motor_state(self):

while True:

@@ -402,7 +402,7 @@ class G1_23_ArmController:

self.all_motor_q = self.get_current_motor_q()

logger_mp.info(f"Current all body motor state q:\n{self.all_motor_q} \n")

logger_mp.info(f"Current two arms motor state q:\n{self.get_current_dual_arm_q()}\n")

- logger_mp.info("Lock all joints except two arms...\n")

+ logger_mp.info("Lock all joints except two arms...")

arm_indices = set(member.value for member in G1_23_JointArmIndex)

for id in G1_23_JointIndex:

@@ -422,7 +422,7 @@ class G1_23_ArmController:

self.msg.motor_cmd[id].kp = self.kp_high

self.msg.motor_cmd[id].kd = self.kd_high

self.msg.motor_cmd[id].q = self.all_motor_q[id]

- logger_mp.info("Lock OK!\n")

+ logger_mp.info("Lock OK!")

# initialize publish thread

self.publish_thread = threading.Thread(target=self._ctrl_motor_state)

@@ -430,7 +430,7 @@ class G1_23_ArmController:

self.publish_thread.daemon = True

self.publish_thread.start()

- logger_mp.info("Initialize G1_23_ArmController OK!\n")

+ logger_mp.info("Initialize G1_23_ArmController OK!")

def _subscribe_motor_state(self):

while True:

@@ -682,7 +682,7 @@ class H1_2_ArmController:

self.all_motor_q = self.get_current_motor_q()

logger_mp.info(f"Current all body motor state q:\n{self.all_motor_q} \n")

logger_mp.info(f"Current two arms motor state q:\n{self.get_current_dual_arm_q()}\n")

- logger_mp.info("Lock all joints except two arms...\n")

+ logger_mp.info("Lock all joints except two arms...")

arm_indices = set(member.value for member in H1_2_JointArmIndex)

for id in H1_2_JointIndex:

@@ -702,7 +702,7 @@ class H1_2_ArmController:

self.msg.motor_cmd[id].kp = self.kp_high

self.msg.motor_cmd[id].kd = self.kd_high

self.msg.motor_cmd[id].q = self.all_motor_q[id]

- logger_mp.info("Lock OK!\n")

+ logger_mp.info("Lock OK!")

# initialize publish thread

self.publish_thread = threading.Thread(target=self._ctrl_motor_state)

@@ -710,7 +710,7 @@ class H1_2_ArmController:

self.publish_thread.daemon = True

self.publish_thread.start()

- logger_mp.info("Initialize H1_2_ArmController OK!\n")

+ logger_mp.info("Initialize H1_2_ArmController OK!")

def _subscribe_motor_state(self):

while True:

@@ -964,7 +964,7 @@ class H1_ArmController:

self.all_motor_q = self.get_current_motor_q()

logger_mp.info(f"Current all body motor state q:\n{self.all_motor_q} \n")

logger_mp.info(f"Current two arms motor state q:\n{self.get_current_dual_arm_q()}\n")

- logger_mp.info("Lock all joints except two arms...\n")

+ logger_mp.info("Lock all joints except two arms...")

for id in H1_JointIndex:

if self._Is_weak_motor(id):

@@ -976,7 +976,7 @@ class H1_ArmController:

self.msg.motor_cmd[id].kd = self.kd_high

self.msg.motor_cmd[id].mode = 0x0A

self.msg.motor_cmd[id].q = self.all_motor_q[id]

- logger_mp.info("Lock OK!\n")

+ logger_mp.info("Lock OK!")

# initialize publish thread

self.publish_thread = threading.Thread(target=self._ctrl_motor_state)

@@ -984,7 +984,7 @@ class H1_ArmController:

self.publish_thread.daemon = True

self.publish_thread.start()

- logger_mp.info("Initialize H1_ArmController OK!\n")

+ logger_mp.info("Initialize H1_ArmController OK!")

def _subscribe_motor_state(self):

while True:

diff --git a/teleop/robot_control/robot_arm_ik.py b/teleop/robot_control/robot_arm_ik.py

index 0a94d11..7276081 100644

--- a/teleop/robot_control/robot_arm_ik.py

+++ b/teleop/robot_control/robot_arm_ik.py

@@ -143,13 +143,22 @@ class G1_29_ArmIK:

self.opti.minimize(50 * self.translational_cost + self.rotation_cost + 0.02 * self.regularization_cost + 0.1 * self.smooth_cost)

opts = {

- 'ipopt':{

- 'print_level':0,

- 'max_iter':50,

- 'tol':1e-6

- },

- 'print_time':False,# print or not

- 'calc_lam_p':False # https://github.com/casadi/casadi/wiki/FAQ:-Why-am-I-getting-%22NaN-detected%22in-my-optimization%3F

+ # CasADi-level options

+ 'expand': True,

+ 'detect_simple_bounds': True,

+ 'calc_lam_p': False, # https://github.com/casadi/casadi/wiki/FAQ:-Why-am-I-getting-%22NaN-detected%22in-my-optimization%3F

+ 'print_time':False, # print or not

+ # IPOPT solver options

+ 'ipopt.sb': 'yes', # disable Ipopt's license message

+ 'ipopt.print_level': 0,

+ 'ipopt.max_iter': 30,

+ 'ipopt.tol': 1e-4,

+ 'ipopt.acceptable_tol': 5e-4,

+ 'ipopt.acceptable_iter': 5,

+ 'ipopt.warm_start_init_point': 'yes',

+ 'ipopt.derivative_test': 'none',

+ 'ipopt.jacobian_approximation': 'exact',

+ # 'ipopt.hessian_approximation': 'limited-memory',

}

self.opti.solver("ipopt", opts)

@@ -369,13 +378,22 @@ class G1_23_ArmIK:

self.opti.minimize(50 * self.translational_cost + 0.5 * self.rotation_cost + 0.02 * self.regularization_cost + 0.1 * self.smooth_cost)

opts = {

- 'ipopt':{

- 'print_level':0,

- 'max_iter':50,

- 'tol':1e-6

- },

- 'print_time':False,# print or not

- 'calc_lam_p':False # https://github.com/casadi/casadi/wiki/FAQ:-Why-am-I-getting-%22NaN-detected%22in-my-optimization%3F

+ # CasADi-level options

+ 'expand': True,

+ 'detect_simple_bounds': True,

+ 'calc_lam_p': False, # https://github.com/casadi/casadi/wiki/FAQ:-Why-am-I-getting-%22NaN-detected%22in-my-optimization%3F

+ 'print_time':False, # print or not

+ # IPOPT solver options

+ 'ipopt.sb': 'yes', # disable Ipopt's license message

+ 'ipopt.print_level': 0,

+ 'ipopt.max_iter': 30,

+ 'ipopt.tol': 1e-4,

+ 'ipopt.acceptable_tol': 5e-4,

+ 'ipopt.acceptable_iter': 5,

+ 'ipopt.warm_start_init_point': 'yes',

+ 'ipopt.derivative_test': 'none',

+ 'ipopt.jacobian_approximation': 'exact',

+ # 'ipopt.hessian_approximation': 'limited-memory',

}

self.opti.solver("ipopt", opts)

@@ -620,13 +638,22 @@ class H1_2_ArmIK:

self.opti.minimize(50 * self.translational_cost + self.rotation_cost + 0.02 * self.regularization_cost + 0.1 * self.smooth_cost)

opts = {

- 'ipopt':{

- 'print_level':0,

- 'max_iter':50,

- 'tol':1e-6

- },

- 'print_time':False,# print or not

- 'calc_lam_p':False # https://github.com/casadi/casadi/wiki/FAQ:-Why-am-I-getting-%22NaN-detected%22in-my-optimization%3F

+ # CasADi-level options

+ 'expand': True,

+ 'detect_simple_bounds': True,

+ 'calc_lam_p': False, # https://github.com/casadi/casadi/wiki/FAQ:-Why-am-I-getting-%22NaN-detected%22in-my-optimization%3F

+ 'print_time':False, # print or not

+ # IPOPT solver options

+ 'ipopt.sb': 'yes', # disable Ipopt's license message

+ 'ipopt.print_level': 0,

+ 'ipopt.max_iter': 30,

+ 'ipopt.tol': 1e-4,

+ 'ipopt.acceptable_tol': 5e-4,

+ 'ipopt.acceptable_iter': 5,

+ 'ipopt.warm_start_init_point': 'yes',

+ 'ipopt.derivative_test': 'none',

+ 'ipopt.jacobian_approximation': 'exact',

+ # 'ipopt.hessian_approximation': 'limited-memory',

}

self.opti.solver("ipopt", opts)

@@ -874,13 +901,22 @@ class H1_ArmIK:

self.opti.minimize(50 * self.translational_cost + 0.5 * self.rotation_cost + 0.02 * self.regularization_cost + 0.1 * self.smooth_cost)

opts = {

- 'ipopt':{

- 'print_level':0,

- 'max_iter':50,

- 'tol':1e-6

- },

- 'print_time':False,# print or not

- 'calc_lam_p':False # https://github.com/casadi/casadi/wiki/FAQ:-Why-am-I-getting-%22NaN-detected%22in-my-optimization%3F

+ # CasADi-level options

+ 'expand': True,

+ 'detect_simple_bounds': True,

+ 'calc_lam_p': False, # https://github.com/casadi/casadi/wiki/FAQ:-Why-am-I-getting-%22NaN-detected%22in-my-optimization%3F

+ 'print_time':False, # print or not

+ # IPOPT solver options

+ 'ipopt.sb': 'yes', # disable Ipopt's license message

+ 'ipopt.print_level': 0,

+ 'ipopt.max_iter': 30,

+ 'ipopt.tol': 1e-4,

+ 'ipopt.acceptable_tol': 5e-4,

+ 'ipopt.acceptable_iter': 5,

+ 'ipopt.warm_start_init_point': 'yes',

+ 'ipopt.derivative_test': 'none',

+ 'ipopt.jacobian_approximation': 'exact',

+ # 'ipopt.hessian_approximation': 'limited-memory',

}

self.opti.solver("ipopt", opts)

diff --git a/teleop/robot_control/robot_hand_brainco.py b/teleop/robot_control/robot_hand_brainco.py

index a37b554..9625d5a 100644

--- a/teleop/robot_control/robot_hand_brainco.py

+++ b/teleop/robot_control/robot_hand_brainco.py

@@ -67,7 +67,7 @@ class Brainco_Controller:

hand_control_process.daemon = True

hand_control_process.start()

- logger_mp.info("Initialize brainco_Controller OK!\n")

+ logger_mp.info("Initialize brainco_Controller OK!")

def _subscribe_hand_state(self):

while True:

diff --git a/teleop/robot_control/robot_hand_inspire.py b/teleop/robot_control/robot_hand_inspire.py

index 3158b99..367728d 100644

--- a/teleop/robot_control/robot_hand_inspire.py

+++ b/teleop/robot_control/robot_hand_inspire.py

@@ -1,7 +1,6 @@

from unitree_sdk2py.core.channel import ChannelPublisher, ChannelSubscriber, ChannelFactoryInitialize # dds

from unitree_sdk2py.idl.unitree_go.msg.dds_ import MotorCmds_, MotorStates_ # idl

from unitree_sdk2py.idl.default import unitree_go_msg_dds__MotorCmd_

-

from teleop.robot_control.hand_retargeting import HandRetargeting, HandType

import numpy as np

from enum import IntEnum

@@ -13,13 +12,13 @@ import logging_mp

logger_mp = logging_mp.get_logger(__name__)

Inspire_Num_Motors = 6

-kTopicInspireCommand = "rt/inspire/cmd"

-kTopicInspireState = "rt/inspire/state"

+kTopicInspireDFXCommand = "rt/inspire/cmd"

+kTopicInspireDFXState = "rt/inspire/state"

-class Inspire_Controller:

+class Inspire_Controller_DFX:

def __init__(self, left_hand_array, right_hand_array, dual_hand_data_lock = None, dual_hand_state_array = None,

dual_hand_action_array = None, fps = 100.0, Unit_Test = False, simulation_mode = False):

- logger_mp.info("Initialize Inspire_Controller...")

+ logger_mp.info("Initialize Inspire_Controller_DFX...")

self.fps = fps

self.Unit_Test = Unit_Test

self.simulation_mode = simulation_mode

@@ -34,10 +33,10 @@ class Inspire_Controller:

ChannelFactoryInitialize(0)

# initialize handcmd publisher and handstate subscriber

- self.HandCmb_publisher = ChannelPublisher(kTopicInspireCommand, MotorCmds_)

+ self.HandCmb_publisher = ChannelPublisher(kTopicInspireDFXCommand, MotorCmds_)

self.HandCmb_publisher.Init()

- self.HandState_subscriber = ChannelSubscriber(kTopicInspireState, MotorStates_)

+ self.HandState_subscriber = ChannelSubscriber(kTopicInspireDFXState, MotorStates_)

self.HandState_subscriber.Init()

# Shared Arrays for hand states

@@ -53,15 +52,15 @@ class Inspire_Controller:

if any(self.right_hand_state_array): # any(self.left_hand_state_array) and

break